Why Multi-Cloud DR Fails Before You Even Need It

Multi-cloud disaster recovery gets complicated fast, with all the vendor promises and architecture diagrams flying around. I spent three years watching teams build DR plans that looked airtight on a spreadsheet, and I learned a lot about what actually breaks at 2 a.m. on a Tuesday. Here’s what I saw.

Here’s the brutal part nobody says out loud: most teams wire up cross-cloud failover once, feel good about it, and never touch it again. Then something breaks. Not the thing they planned for. Something else. Something that exposes gaps nobody documented — at least not until customers are screaming and Slack is on fire.

The Three Failure Modes That Live in the Gaps

Data replication lag is the first trap. You’re copying from AWS to GCP, the dashboard shows green, and then your primary database goes dark. It turns out the replica was 40 minutes behind: your ETL job spiked on a Tuesday afternoon and nobody was watching lag. You’d promised to lose no more than 15 minutes of data. You just lost 40.

DNS failover misconfiguration is the second. Health checks point at both clouds. Fine. But your TTL is sitting at 3,600 seconds (one full hour) because someone copy-pasted from an old internal doc. The primary endpoint dies at 11 p.m. Clients keep hammering the dead IP until midnight, and for that whole hour, traffic never moves. That’s what makes this failure mode so infuriating for operations folks: it’s invisible until the moment it isn’t.

IAM permission gaps between clouds are the third. And honestly, it’s the one that makes grown engineers swear at their monitors. Compute fails over fine. Storage fails over fine. But your app needs secrets from AWS Secrets Manager, and the GCP service account never got those permissions. Nobody tested that path. The app boots, can’t authenticate, and just sits there broken while the on-call engineer stares at logs in disbelief.

I’ve watched each of these happen in production. Real outages. Real consequences. Don’t make my mistake.

Decide What Actually Needs to Fail Over

Before designing anything, you have to be ruthlessly honest — at least if you want an architecture that actually holds. What are you really protecting? What can you afford to lose?

Split workloads into two buckets. Stateless and stateful. Stateless is the easy side: API servers, frontends, batch processors. Spin up new instances in the other cloud, point traffic there. Done in minutes if automation is ready.

Stateful is where the complexity lives. Databases. Message queues. Session stores. Anything that remembers something. That’s the hard part — and it deserves to be treated like it.

The RTO and RPO Framework

RTO is Recovery Time Objective: how long you can afford to be down. It’s also the number that drives almost every architectural decision you’ll make. RPO is Recovery Point Objective: how much data you can lose, measured in time.

Sub-one-hour RTO on stateful workloads? That’s genuinely difficult. You need streaming replication with near-zero lag. That costs money and adds operational complexity. Say it plainly. This is hard. Don’t pretend it isn’t.

Four-hour RTO with 30-minute data loss tolerance? Now you have options. Scheduled snapshots. Async replication. Simpler runbooks. Cheaper tooling. Breathe.

For each service, ask three questions:

- How long can it be down before users notice or complain?

- How much data loss breaks the service versus just annoying people?

- If failover is broken, do you roll back or push forward?

Your payment service? Sub-hour RTO, near-zero RPO. Your analytics dashboard? Probably four-hour RTO, two-hour RPO. Your internal wiki? Honestly, a day. Maybe two. Don’t over-engineer it.

Build a one-page table. Service name, RTO, RPO, failover mechanism, owner. That table becomes your architecture. Everything flows from those numbers — not from what sounds impressive in a design doc.
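Here is a minimal sketch of that table kept as data in Python, so it can live in version control next to your infrastructure code. The rows mirror the examples above; the owner names and the `suggest_mechanism` thresholds are hypothetical, a rough encoding of this section’s RTO/RPO guidance rather than a rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceDR:
    """One row of the one-page DR table."""
    name: str
    rto_minutes: int   # how long the service can be down
    rpo_minutes: int   # how much data loss, measured in time, is tolerable
    stateful: bool
    owner: str


def suggest_mechanism(svc: ServiceDR) -> str:
    """Hypothetical heuristic: stateless redeploys, tight targets stream, the rest go async."""
    if not svc.stateful:
        return "redeploy stateless instances in the standby cloud"
    if svc.rto_minutes < 60 or svc.rpo_minutes < 5:
        return "streaming replication to a warm standby"
    return "async replication or scheduled snapshots"


# Example rows; team names are made up for illustration.
TABLE = [
    ServiceDR("payments", rto_minutes=30, rpo_minutes=1, stateful=True, owner="payments-team"),
    ServiceDR("analytics-dashboard", rto_minutes=240, rpo_minutes=120, stateful=True, owner="data-team"),
    ServiceDR("internal-wiki", rto_minutes=1440, rpo_minutes=1440, stateful=True, owner="it-ops"),
    ServiceDR("api-gateway", rto_minutes=15, rpo_minutes=0, stateful=False, owner="platform-team"),
]

if __name__ == "__main__":
    print(f"{'service':<22}{'RTO':>6}{'RPO':>6}  {'owner':<15}mechanism")
    for svc in TABLE:
        print(f"{svc.name:<22}{svc.rto_minutes:>6}{svc.rpo_minutes:>6}  "
              f"{svc.owner:<15}{suggest_mechanism(svc)}")
```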

Setting Up Cross-Cloud Data Replication That Actually Holds

There are two broad approaches: managed replication tools and database-native replication. Each has trade-offs worth seeing clearly before you commit.

Managed Replication Tools

AWS DMS can replicate into a Cloud SQL database on GCP. Rclone syncs object storage. Kafka Connect streams events across clouds. These tools handle many of the messy bits (schema changes, constraint violations, partial failures) so you don’t have to write that logic yourself.

AWS DMS starts around $0.25 per hour on a dms.t3.small instance. That’s roughly $180 per month for a minimal setup. Full production configurations run $2,000 to $5,000 monthly. You’re paying for not writing replication logic yourself. Whether that’s worth it depends on your team size and your Tuesday nights.

Lag usually stays under five minutes if you size the instance properly. But here’s the catch: heavy write loads can push DMS behind without obvious warnings. You don’t notice until failover reveals you’ve lost 47 minutes of transactions. Monitoring replication lag isn’t optional — at least if you care about your RPO meaning anything.
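A minimal sketch of that lag check, assuming you already collect lag samples in seconds (for DMS, the `CDCLatencyTarget` CloudWatch metric is one source). The function name, the 50% warning threshold, and the status labels are illustrative assumptions, not a standard.

```python
from typing import Sequence


def lag_status(lag_samples_s: Sequence[float], rpo_s: float, warn_fraction: float = 0.5) -> str:
    """Classify a window of replication-lag samples (oldest first) against the RPO.

    'no_data' - no samples at all, which should page someone too
    'breach'  - the latest sample has reached the RPO
    'warn'    - some sample in the window used warn_fraction of the RPO or more
    'ok'      - otherwise
    """
    if not lag_samples_s:
        return "no_data"
    if lag_samples_s[-1] >= rpo_s:
        return "breach"
    if max(lag_samples_s) >= warn_fraction * rpo_s:
        return "warn"
    return "ok"


RPO = 15 * 60  # a 15-minute RPO, in seconds
print(lag_status([30, 45, 60], RPO))       # ok
print(lag_status([60, 300, 500], RPO))     # warn
print(lag_status([600, 1200, 2400], RPO))  # breach (40 minutes behind)
```

Warning on the window maximum, not just the latest sample, is deliberate: a spike that recovered on its own is exactly the early signal you want before the next one doesn’t.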

For object storage, rclone is open-source and costs nothing beyond compute. It’s not magical. Scheduled syncs. Good for data lakes. Useless for real-time consistency requirements.
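Scheduled sync puts a bound on how stale the standby can get. A rough worst case, assuming a pass can miss anything written just after it lists the source: one full interval until the next pass starts, plus the time that pass takes to finish. The function name and the example schedule are hypothetical.

```python
def worst_case_staleness_min(sync_interval_min: float, max_sync_duration_min: float) -> float:
    """Upper bound, in minutes, on data missing from the standby with scheduled syncs.

    An object written right after a pass lists the source is only copied by the
    next pass, which starts one interval later and may take up to
    max_sync_duration_min to complete.
    """
    if sync_interval_min <= 0 or max_sync_duration_min < 0:
        raise ValueError("interval must be positive and duration non-negative")
    return sync_interval_min + max_sync_duration_min


# Example: cron runs `rclone sync aws-s3:lake gcs:lake` every hour (remote names are
# hypothetical), and a pass takes up to 20 minutes on a busy day.
print(worst_case_staleness_min(60, 20))  # 80
```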

Database-Native Replication

PostgreSQL can replicate to PostgreSQL. MySQL to MySQL. Built-in. Battle-tested. Gives you tighter control than any managed service will.

The trade-off is real ownership. You’re monitoring lag yourself. Handling replication errors yourself. Promoting replicas during failover yourself. That’s more operational surface, and it weighs heaviest on a small team.

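Asynchronous streaming replication can keep lag under one second. Synchronous replication goes further: a commit doesn’t return until the standby has it, at the cost of a cross-cloud round trip on every commit. No extra licensing. Just compute cost for the replica instance. That math often works in native replication’s favor for teams with database expertise already on staff.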
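If you own lag monitoring yourself, a standby-side query is a common starting point. This sketch assumes PostgreSQL streaming replication and any DB-API 2.0 driver such as psycopg; the function name is hypothetical. One known caveat: on an idle primary, the last replayed transaction keeps aging, so this number climbs even when the standby is fully caught up.

```python
from typing import Optional

# Run on the standby. pg_last_xact_replay_timestamp() is NULL on a primary or before
# the standby has replayed anything, which makes the whole expression NULL.
REPLICA_LAG_SQL = "SELECT EXTRACT(EPOCH FROM now() - pg_last_xact_replay_timestamp())"


def replica_lag_seconds(conn) -> Optional[float]:
    """Return replay lag in seconds on a PostgreSQL standby, or None if it is unknown.

    `conn` is any DB-API 2.0 connection.
    """
    cur = conn.cursor()
    try:
        cur.execute(REPLICA_LAG_SQL)
        row = cur.fetchone()
    finally:
        cur.close()
    if row is None or row[0] is None:
        return None
    # EXTRACT can come back as a Decimal (numeric), so normalize to float.
    return float(row[0])
```

Treat `None` as an alert, not as zero: it usually means you are pointed at the wrong server or the standby never started replaying.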

The fiddly part: PostgreSQL from AWS to GCP means private connectivity between clouds (typically a site-to-site VPN), security group and firewall rules, and BGP routing. Not complicated exactly, but detailed. Expect a full day to get it right the first time.

Infrastructure Parity with Terraform

Probably should have opened with this section, honestly.

Whatever data layer you pick, your infrastructure needs to be identical across both clouds. Same database version (say, PostgreSQL 15.4, not “latest”). Same table schemas. Same indexes. Same extensions. That sounds obvious. In practice, it rarely is.
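One way to catch drift is to describe each environment as a small dictionary and diff the two. This is a sketch under assumptions: the dictionary keys are illustrative, and `schema_fingerprint` assumes you feed it `pg_dump --schema-only` output from each side. It ignores comment lines, which carry version strings that would otherwise differ.

```python
import hashlib


def schema_fingerprint(schema_sql: str) -> str:
    """Hash a schema dump, ignoring blank lines, SQL comments, and psql meta-commands.

    Meta-command lines (starting with a backslash) are skipped because some pg_dump
    versions emit them with per-dump values.
    """
    lines = []
    for line in schema_sql.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("--") or stripped.startswith("\\"):
            continue
        lines.append(stripped)
    return hashlib.sha256("\n".join(lines).encode("utf-8")).hexdigest()


def parity_diff(primary: dict, standby: dict) -> list[str]:
    """Return one human-readable line per key whose value differs between environments."""
    diffs = []
    for key in sorted(set(primary) | set(standby)):
        a, b = primary.get(key), standby.get(key)
        if a != b:
            diffs.append(f"{key}: primary={a!r} standby={b!r}")
    return diffs


# Illustrative inputs standing in for facts gathered from each cloud.
ddl = "CREATE TABLE orders (id bigint PRIMARY KEY);"
aws = {"engine_version": "15.4", "extensions": frozenset({"pg_trgm"}),
       "schema": schema_fingerprint("-- Dumped from database version 15.4\n" + ddl)}
gcp = {"engine_version": "15.5", "extensions": frozenset({"pg_trgm"}),
       "schema": schema_fingerprint("-- Dumped from database version 15.5\n" + ddl)}

for line in parity_diff(aws, gcp):
    print(line)  # engine_version: primary='15.4' standby='15.5'
```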

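Use Terraform with one shared module that wraps both the AWS and Google providers, so you define your database layer once and deploy it to both clouds. Your root module takes a cloud_provider variable and outputs the connection string. Run terraform apply -var cloud_provider=aws, then again with cloud_provider=gcp, each against its own workspace or state file. If both runs share one state, the second apply will try to replace the first cloud’s resources. Same definition, two environments.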

Version control your Terraform. When schemas change, deploy to standby first, test it, then deploy to primary. That’s the only safe order. Call me paranoid, but version-controlled Terraform has worked for me, and ad-hoc schema changes never have.
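The standby-first order can be scripted so nobody skips a step. A minimal sketch, assuming one Terraform workspace per cloud (hypothetical names `gcp` and `aws`), Terraform 1.4 or newer for `workspace select -or-create`, and a `verify` callback you supply. By default it runs in dry-run mode and only prints the commands.

```python
import subprocess
from typing import Callable

# Standby (GCP) first, then primary (AWS). Workspace names are hypothetical;
# what matters is one workspace, and therefore one state, per cloud.
DEPLOY_ORDER = [("gcp", "standby"), ("aws", "primary")]


def terraform_commands(cloud: str) -> list[list[str]]:
    """Commands to select (or create) the cloud's workspace and apply to it."""
    return [
        ["terraform", "workspace", "select", "-or-create", cloud],  # Terraform 1.4+
        ["terraform", "apply", "-var", f"cloud_provider={cloud}"],
    ]


def deploy(verify: Callable[[str], bool], dry_run: bool = True) -> None:
    """Apply to each cloud in order; stop the rollout if a cloud fails verification."""
    for cloud, role in DEPLOY_ORDER:
        for cmd in terraform_commands(cloud):
            print(f"[{role}] {' '.join(cmd)}")
            if not dry_run:
                subprocess.run(cmd, check=True)
        # verify is your own smoke test: migrations applied, app healthy, and so on.
        if not verify(cloud):
            raise RuntimeError(f"{role} ({cloud}) failed verification; stopping rollout")


if __name__ == "__main__":
    deploy(verify=lambda cloud: True)  # dry run: prints the four commands
```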

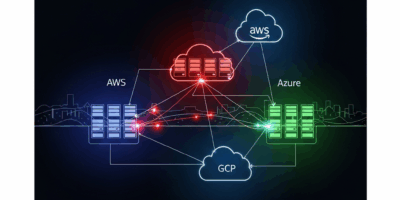

Failover Routing and How to Avoid a DNS Nightmare

Data is replicated. Infrastructure is identical. Now you need traffic to actually move when primary fails — and that’s where DNS bites people.

Use a DNS layer that survives your primary cloud’s failure. Cloudflare. Route 53. Google Cloud DNS. Ideally something outside your primary cloud entirely, or at least a global service rather than one tied to a single region. If your DNS is in the same AWS region that just went dark, you have a problem.

Health Checks That Actually Work

Create a dedicated health check endpoint in each cloud. Not a load balancer ping — an actual HTTP endpoint that returns 200 only when the service is healthy, the database is reachable, and replication lag is inside your RPO threshold.

Keep it simple. One endpoint. Check database connectivity. Check replication lag. Return something like:

```json
{
  "status": "healthy",
  "lag_seconds": 12,
  "timestamp": "2025-01-15T14:22:00Z"
}
```

Configure DNS health checks to hit this endpoint every 30 seconds from multiple regions. Two consecutive failures trigger failover to the standby cloud. Setup takes under five minutes on Cloudflare; Route 53 takes maybe 15. It’s one of the few DR tasks that’s genuinely fast.
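A minimal sketch of that endpoint using only Python’s standard library. `check_database`, the `/healthz` path, port 8080, and the 15-minute RPO are hypothetical placeholders. The important behavior is returning 503 instead of 200 whenever the database is unreachable or lag is outside the RPO, so the DNS health check has something to fail on.

```python
import json
from datetime import datetime, timezone
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional

RPO_SECONDS = 15 * 60  # assumed RPO; use the value from your DR table


def check_database() -> tuple[bool, Optional[float]]:
    """Hypothetical probe: replace with a real connection test and lag query."""
    return True, 12.0


def health_payload(db_reachable: bool, lag_seconds: Optional[float],
                   rpo_seconds: float = RPO_SECONDS):
    """Return (HTTP status, body). Healthy only if the DB answers and lag is within the RPO."""
    healthy = db_reachable and lag_seconds is not None and lag_seconds <= rpo_seconds
    body = {
        "status": "healthy" if healthy else "unhealthy",
        "lag_seconds": lag_seconds,
        "timestamp": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
    }
    return (200 if healthy else 503), body


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/healthz":  # hypothetical path
            self.send_error(404)
            return
        try:
            db_ok, lag = check_database()
        except Exception:
            db_ok, lag = False, None  # a probe that crashes counts as unhealthy
        code, body = health_payload(db_ok, lag)
        data = json.dumps(body).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port: int = 8080) -> None:
    """Blocks forever; run one of these alongside each cloud's deployment."""
    HTTPServer(("0.0.0.0", port), HealthHandler).serve_forever()
```

Returning the same JSON body on a 503 keeps the response useful for whoever is reading logs during the incident.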

The TTL Gotcha

Set DNS TTL to 60 seconds maximum. Not 300. Not 600. Sixty.

When primary dies, clients still have the old IP cached until their TTL expires. A one-hour TTL means clients hammer a dead IP for up to 60 minutes before re-querying DNS. At 60 seconds, that window shrinks to a minute.

Yes, clients query DNS more often, which adds a little latency. But failover completes in about two minutes instead of an hour. That trade-off isn’t even close.
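A rough sketch of the arithmetic behind those numbers: detection takes up to the check interval times the number of failures needed to trip, and a client that resolved just before the switch then holds the old answer for one TTL. The function name is illustrative, and the model deliberately ignores the DNS provider’s own update delay.

```python
def worst_case_failover_seconds(check_interval_s: float, failures_to_trip: int,
                                ttl_s: float) -> float:
    """Rough upper bound on how long a client can keep hitting a dead endpoint.

    Detection: the endpoint can die just after a passing check, so tripping takes
    up to failures_to_trip full intervals.
    Caching: a client that resolved just before the switch keeps the old answer
    for up to ttl_s seconds.
    Not modeled: provider update delay, and resolvers or client runtimes that
    cache longer than the TTL.
    """
    return check_interval_s * failures_to_trip + ttl_s


print(worst_case_failover_seconds(30, 2, 60))    # 120
print(worst_case_failover_seconds(30, 2, 3600))  # 3660
```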

One Concrete Scenario

Your primary AWS region, us-east-1, goes completely offline at 23:02.

AWS health check fails. Cloudflare catches it at 23:02. By 23:03, new DNS queries resolve to your GCP instance in us-central1. Existing clients wait for their cached TTL — maximum 60 seconds — then follow.

By 23:04, most traffic hits GCP. The database is within five minutes of primary: DMS lag had been holding under five minutes, so you’ve lost at most about that much data. PagerDuty fires. The on-call engineer promotes the replica, confirms AWS replication has stopped, and makes GCP the primary. By 23:07, you’re running on GCP only.

Five minutes from outage to full recovery, because the health check was tested, the TTL was set right, and replication was already running. Here’s how you make sure that’s your story too.

Testing Your DR Plan Without Destroying Production

This is the section most disaster recovery articles skip. It’s also the only section that matters — at least if you want your DR plan to be operational rather than theoretical. There’s a meaningful difference between those two things, and you only discover it during an outage.

Chaos-Lite Testing

Start small. In a staging environment that mirrors production infrastructure at a smaller scale (say, a db.t3.medium instead of a db.r6g.2xlarge), simulate the primary going down.

Kill the AWS health check endpoint. Watch DNS failover to GCP. Verify traffic moves. Verify the app starts. Verify it reads its data correctly. Verify the IAM permissions actually work end-to-end. Do this monthly. It takes maybe 30 minutes.
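Those verification steps are easier to repeat every month as a script than as a memory. A minimal sketch of a drill runner; every check below is a hypothetical stand-in to be replaced with a real probe run under the standby cloud’s identity. The secret check fails on purpose to show what an IAM gap looks like in the report.

```python
import time
from typing import Callable


def run_drill(checks: dict[str, Callable[[], bool]]) -> list[tuple[str, bool, float]]:
    """Run each named check in order; return (name, passed, seconds taken)."""
    results = []
    for name, check in checks.items():
        start = time.monotonic()
        try:
            ok = bool(check())
        except Exception as exc:  # a check that crashes is a failed check
            print(f"{name}: raised {exc!r}")
            ok = False
        results.append((name, ok, time.monotonic() - start))
    return results


def fetch_secret(name: str) -> str:
    """Hypothetical: read a secret using the standby cloud's service identity."""
    raise PermissionError(f"standby identity cannot read {name}")


checks = {
    "dns resolves to standby": lambda: True,          # hypothetical stand-in
    "app health endpoint returns 200": lambda: True,  # hypothetical stand-in
    "standby can read db-password": lambda: fetch_secret("db-password") is not None,
}

for name, ok, secs in run_drill(checks):
    print(f"{'PASS' if ok else 'FAIL'}  {name} ({secs:.2f}s)")
```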

Then escalate. Promote the replica manually. Pause replication for 10 minutes before failover to simulate real lag. See what breaks. You’ll find things. Fix them before they find you at midnight.

Every quarter, run a full orchestrated failover. Set a date two weeks out. Tell the whole team. Fail production over to the standby cloud completely. Run there for two hours. Fail back. Document every single thing that went wrong — not to assign blame, but because that list is your roadmap.

A Simple Quarterly Checklist

- Week 1: Health check failover test in staging. Verify DNS switches. Verify traffic moves. 30 minutes.

- Week 6: Replica promotion test. Simulate primary database going dark. Promote standby. Verify data consistency against a known checkpoint.

- Week 11: Full orchestrated failover. Two hours running production on standby cloud. Full team involved. Document every issue that surfaces.

- Week 13: Post-incident review. Fix everything that broke. Update runbooks. Update Terraform. Update the one-page table.
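For the Week 6 consistency check, one simple form of “known checkpoint” is a marker row: write a unique token on the primary before the drill, then confirm it exists on the promoted standby. This sketch uses in-memory SQLite so it runs anywhere. The `dr_checkpoint` table is hypothetical, and with a PostgreSQL driver such as psycopg you would go through a cursor and use `%s` placeholders instead of `?`.

```python
import sqlite3
import uuid
from datetime import datetime, timezone

# Hypothetical marker table. Create it on both sides through the same
# migration path as the rest of the schema.
CREATE_SQL = "CREATE TABLE IF NOT EXISTS dr_checkpoint (token TEXT PRIMARY KEY, written_at TEXT)"


def write_checkpoint(conn) -> str:
    """Write a unique marker row on the primary and return its token."""
    token = uuid.uuid4().hex
    conn.execute(
        "INSERT INTO dr_checkpoint (token, written_at) VALUES (?, ?)",
        (token, datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()
    return token


def checkpoint_present(conn, token: str) -> bool:
    """True if the marker row is visible on this database."""
    row = conn.execute("SELECT 1 FROM dr_checkpoint WHERE token = ?", (token,)).fetchone()
    return row is not None


# Demo: two in-memory SQLite databases standing in for primary and standby.
primary = sqlite3.connect(":memory:")
standby = sqlite3.connect(":memory:")
for conn in (primary, standby):
    conn.execute(CREATE_SQL)

token = write_checkpoint(primary)
print(checkpoint_present(standby, token))  # False: not replicated yet
primary.backup(standby)  # crude stand-in for replication catching up
print(checkpoint_present(standby, token))  # True
```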

Three tests and one review per quarter, so twelve tests a year. Thirty minutes each for the small ones. Two hours for the big quarterly run.

After three complete cycles, something shifts. Your DR plan isn’t theoretical anymore — it’s proven. On real infrastructure. Multiple times. That confidence is not something you can buy from a vendor or fake with a diagram.

Teams that skip testing find out their plan doesn’t work at 2 a.m. while customers are screaming. That’s the worst classroom there is. Test it now, on your schedule, when nothing is on fire. That’s the entire point.
