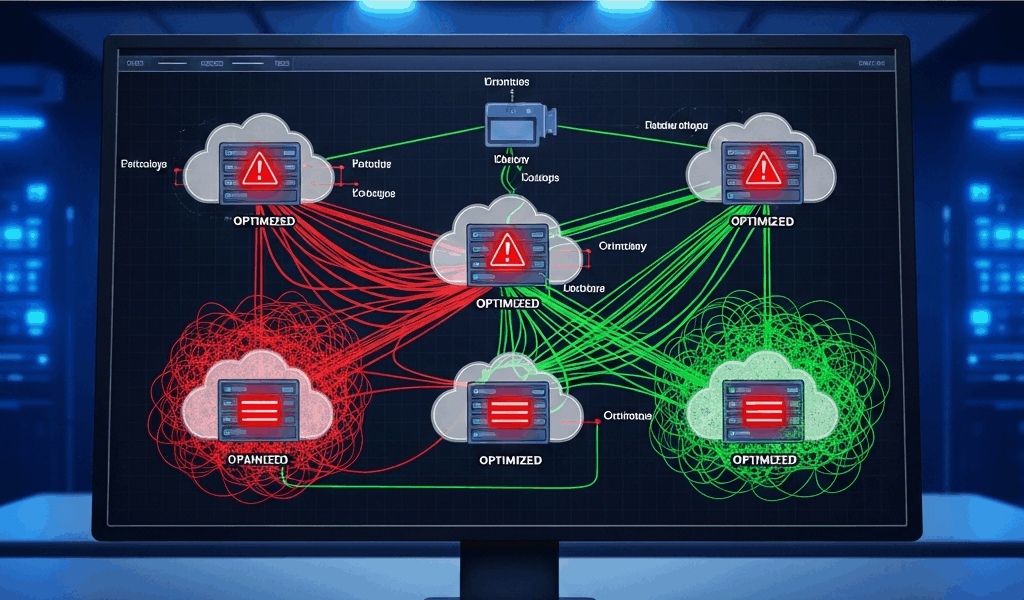

Why Multi-Cloud Networking Creates Latency Problems

Multi-cloud networking has gotten complicated, and all the “just connect your VPCs” advice flying around doesn’t help. I’ve watched teams spend months building beautifully distributed architectures only to get demolished by their first load test. The same handful of problems show up every time someone wires AWS, Azure, and GCP together without a real plan. Here’s what actually breaks.

The first trap is embarrassingly simple. By default, traffic between clouds routes over the public internet. Your packets leave one provider’s network, bounce across multiple ISP hops, hit congestion points you have zero control over, and arrive slower than if everything lived in a single region. I’ve personally seen p95 latency jump from 8ms to 120ms the moment traffic crossed public internet boundaries. That was on a Tuesday afternoon. Took us until Thursday to admit what the actual problem was.

The second problem sneaks up on you. Most teams pick regions based on where users live or where compute was cheapest, without ever asking how well those regions connect to each other. Cross-cloud latency depends on physical distance and on where the providers’ networks actually meet, not on region names. AWS us-east-1 and Azure East US 2 are both in Virginia, while GCP us-central1 is in Iowa, so a us-east-1 to us-central1 path always carries the Virginia-to-Iowa distance, no matter how well you tune it. You’ll usually discover this after go-live.

DNS resolution delays compound everything. When your application resolves a service name whose records are served from a different cloud, the nameserver answering the query can be far from both the caller and the service. Some teams run authoritative DNS in one region and query it from another, casually adding 20–80ms to every uncached lookup. Multiply that across thousands of requests with short TTLs and you’ve quietly built a performance tax into every transaction your users touch.

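MTU misalignment between clouds is the silent killer, and honestly one of the nastiest issues to debug. The defaults differ. AWS supports 9001-byte jumbo frames inside a VPC, but traffic through an internet gateway, VPN, or inter-region peering is capped at 1500 bytes or less. Azure VMs default to 1500. GCP VPC networks default to 1460. Encrypted tunnels shave off more, which is why 1400 shows up so often. Cloud Interconnect attachments use whatever MTU you configure rather than negotiating one. When a packet sized for one hop hits a smaller one, two things can happen. It gets fragmented, which adds overhead, and losing a single fragment loses the whole packet. Or, if Don’t Fragment is set and ICMP is filtered, it is silently dropped. Either way you get TCP retransmits. Retransmits mean latency spikes that look completely random until you run a packet capture and see exactly what’s happening at the wire level.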
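To see why a mismatch hurts, here’s a minimal Python sketch of the IPv4 fragmentation arithmetic. It assumes a 20-byte IPv4 header with no options and the Don’t Fragment bit clear. `ipv4_fragments` is a hypothetical helper for illustration, not part of any library.

```python
def ipv4_fragments(packet_len: int, link_mtu: int, ip_header: int = 20) -> list[int]:
    """Payload bytes carried by each fragment when an IPv4 packet of packet_len
    bytes (header included) crosses a link whose MTU is link_mtu.

    Hypothetical helper; assumes a 20-byte header with no options and DF clear.
    """
    payload = packet_len - ip_header
    if packet_len <= link_mtu:
        return [payload]  # fits as-is, no fragmentation
    # Every fragment except the last must carry a multiple of 8 payload bytes.
    max_chunk = (link_mtu - ip_header) // 8 * 8
    sizes = []
    while payload > 0:
        chunk = min(max_chunk, payload)
        sizes.append(chunk)
        payload -= chunk
    return sizes


# A full-size packet from a 1500-byte host hitting a 1400-byte hop:
print(ipv4_fragments(1500, 1400))  # [1376, 104]
```

Two packets now travel where one did, and each carries its own header. If either fragment is lost, the receiver discards the whole datagram and TCP has to retransmit the segment.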

The pattern is almost always the same: most engineers don’t troubleshoot latency until customers are already complaining about timeouts. By then you’re under pressure, and debugging turns into firefighting instead of methodical work. Don’t make my mistake.

The Connectivity Options Worth Actually Using

AWS Transit Gateway — Best for AWS-centric environments

AWS Transit Gateway is a sensible starting point, because multi-cloud routing is much easier to manage with a central hub. Transit Gateway acts as a regional router. You attach VPCs, Site-to-Site VPN connections, and Direct Connect gateways to it, and you manage routing in its route tables instead of juggling a full mesh of individual VPC peering connections. Setup involves three steps: create the gateway, attach your VPCs, and add routes in each VPC that send cross-network traffic to it.
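The case for a hub is mostly arithmetic. Here is a quick Python sketch, assuming every network needs to reach every other one. A full mesh needs one peering connection per pair, while a hub needs one attachment per network. The function names are illustrative.

```python
def full_mesh_links(n: int) -> int:
    """Peering connections needed so every one of n networks reaches every other."""
    return n * (n - 1) // 2


def hub_attachments(n: int) -> int:
    """Attachments needed when each of n networks connects once to a central hub."""
    return n


for n in (4, 10, 25):
    print(f"{n} networks: mesh={full_mesh_links(n)}, hub={hub_attachments(n)}")
# 4 networks: mesh=6, hub=4
# 10 networks: mesh=45, hub=10
# 25 networks: mesh=300, hub=25
```

Each peering connection also needs route entries on both sides. The management burden of a mesh therefore grows roughly with the square of the network count, while a hub grows linearly.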

The latency benefit is real but bounded. AWS-to-AWS traffic stays on AWS’s private backbone, which is solid. AWS-to-Azure or AWS-to-GCP traffic still has to reach those providers somehow. That usually means adding AWS Direct Connect, a dedicated circuit (typically 1Gbps or 10Gbps) into a colocation facility. From there you can link to your on-premises network or hand off to a partner that reaches the other clouds. A 1Gbps port runs $0.30/hour, plus data transfer costs on top. Worth it if you’re moving terabytes monthly. Not worth it for occasional API calls across clouds.
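Whether “terabytes monthly” clears the bar depends on the gap between internet egress and Direct Connect egress rates. Here’s a rough break-even sketch in Python. Only the $0.30/hour port fee comes from the text. The per-GB rates below are placeholder assumptions, so substitute current pricing for your region. The sketch also ignores cross-connect and partner fees.

```python
HOURS_PER_MONTH = 730  # common billing approximation: 8,760 hours / 12


def dx_break_even_gb(port_per_hour: float, internet_per_gb: float, dx_per_gb: float) -> float:
    """Monthly GB at which Direct Connect's cheaper per-GB rate pays for the port.

    Ignores cross-connect, colocation, and partner fees, which only raise the bar.
    """
    monthly_port_fee = port_per_hour * HOURS_PER_MONTH
    savings_per_gb = internet_per_gb - dx_per_gb
    if savings_per_gb <= 0:
        return float("inf")  # no per-GB saving, so it never breaks even
    return monthly_port_fee / savings_per_gb


# Placeholder rates (assumptions): $0.09/GB internet egress, $0.02/GB over Direct Connect.
print(f"{dx_break_even_gb(0.30, 0.09, 0.02):,.0f} GB/month")  # 3,129 GB/month
```

With those placeholders the port pays for itself at roughly 3TB a month. Below that volume, the fixed port fee dominates.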

Azure Virtual WAN — Best for hybrid or Azure-first multi-cloud

Azure Virtual WAN is Azure’s answer to Transit Gateway. It has support for branch offices, on-premises sites, and SD-WAN partner devices built in from day one. Beyond hub-and-spoke connectivity, you get automatic routing between connected VNets, more BGP control than basic VNet peering, and native ExpressRoute integration without bolting on extra services.

That’s why Virtual WAN appeals to Azure-first teams like ours. One hub terminates connections from multiple VNets, on-premises sites, and ExpressRoute circuits without manually configuring each peering relationship. Traffic between Azure resources never leaves Azure’s backbone. Traffic to other clouds still traverses the internet unless you pay for ExpressRoute through a connectivity partner like Megaport, which we’ll get to.

GCP Cloud Interconnect — Lowest latency for Google-first stacks

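GCP Cloud Interconnect provides dedicated, private connectivity between GCP and your on-premises environment or partner clouds. Dedicated Interconnect comes in 10Gbps or 100Gbps circuits. If you need a smaller pipe, Partner Interconnect sells capacities starting at 50Mbps through a service provider. Pricing is an hourly charge per circuit plus attachment and egress fees, and a 10Gbps circuit costs considerably more than the $0.30/hour AWS charges for a 1Gbps port, so check current list prices for your region. The latency improvement comes from removing internet congestion and detours. Within the same metro, round trips of 1–3ms are typical, versus the 40–100ms you can see over the internet. Interconnect can’t shorten physical distance, though, so a cross-country path stays a cross-country path.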
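A quick way to sanity-check latency claims like these is to compute the physics floor. This Python sketch makes two assumptions. First, light in fiber covers about 200km per millisecond, roughly two-thirds of its speed in a vacuum. Second, you know the fiber route length, which is usually longer than the straight-line distance. `min_rtt_ms` is a hypothetical helper.

```python
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber


def min_rtt_ms(route_km: float) -> float:
    """Lower bound on round-trip time over a fiber route of route_km kilometers.

    Ignores queuing, serialization, and router processing, which only add delay.
    """
    return 2 * route_km / FIBER_KM_PER_MS


print(min_rtt_ms(50))    # 0.5, a same-metro hop
print(min_rtt_ms(1500))  # 15.0, a long regional haul
```

Private interconnect gets you close to that floor by removing congestion and detours. It can’t get you under it.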

The catch: Dedicated Interconnect requires your router to sit in a colocation facility where Google has a presence, such as an Equinix site. Partner Interconnect relaxes that by letting a provider like Megaport or Colt carry the last leg. You still need to be colocated or partnered with someone who is. If you can reach neither, Cloud Interconnect simply isn’t an option for you. Full stop.

Megaport or Equinix Fabric — Best for multi-cloud without vendor lock-in

I’ve ended up a Megaport person, mostly because direct provider connections never quite covered all my clouds cleanly. Megaport and Equinix Fabric let you create software-defined connections between any combination of clouds from a single platform. There’s no separate negotiation with each provider and no separate contracts to juggle.

A typical setup: $500/month for a 10Mbps virtual cross-connect between Equinix’s London facility and AWS eu-west-2, plus another $500/month connecting to Azure’s London peering point. Latency is predictable, typically 2–5ms between clouds routed through the same facility. The trade-off is paying for every connection. Each cloud needs its own virtual cross-connect, so connecting four clouds means four monthly charges. That math adds up fast.

Common Misconfigurations That Tank Performance

Routing all traffic through a single region unnecessarily

One team I worked with had built services across AWS us-west-2, Azure East US, and GCP us-central1. They routed everything through an AWS Transit Gateway in us-east-1, following a whiteboard diagram that made sense in a conference room but not in production. Every request made a triangle: West Coast to East Coast, then back out to the actual destination service. Latency hit 180ms for what should have been a 20ms call. The routing decision had been made months earlier. By the time anyone traced the slowdown back to it, it had become the kind of performance disaster every engineer dreads.

The symptom: response times that don’t budge even after you’ve upgraded instances or thrown caching at the problem. Consistent high latency regardless of time of day. The fix is building regional hubs or switching to active-active routing where traffic takes the shortest available path.
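The hairpin is easy to model. Below is a Python sketch that uses Dijkstra’s algorithm over one-way latencies. It compares the shortest path against a path forced through a hub. The node names and latency values are hypothetical, not measurements.

```python
import heapq


def shortest_ms(graph: dict, src: str, dst: str) -> float:
    """Dijkstra's algorithm: lowest total latency (ms) from src to dst."""
    best = {src: 0.0}
    queue = [(0.0, src)]
    while queue:
        dist, node = heapq.heappop(queue)
        if node == dst:
            return dist
        if dist > best.get(node, float("inf")):
            continue  # stale entry; a shorter route to node was already found
        for neighbor, latency in graph.get(node, {}).items():
            candidate = dist + latency
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                heapq.heappush(queue, (candidate, neighbor))
    return float("inf")  # unreachable


def via_hub_ms(graph: dict, src: str, hub: str, dst: str) -> float:
    """Latency when every flow must hairpin through a central hub."""
    return shortest_ms(graph, src, hub) + shortest_ms(graph, hub, dst)


# Hypothetical one-way latencies in ms, treated as symmetric.
links = {
    ("aws-usw2", "aws-use1"): 35.0,
    ("aws-use1", "gcp-usc1"): 15.0,
    ("aws-usw2", "gcp-usc1"): 20.0,
}
graph: dict = {}
for (a, b), ms in links.items():
    graph.setdefault(a, {})[b] = ms
    graph.setdefault(b, {})[a] = ms

print(shortest_ms(graph, "aws-usw2", "gcp-usc1"))             # 20.0
print(via_hub_ms(graph, "aws-usw2", "aws-use1", "gcp-usc1"))  # 50.0
```

Regional hubs or routing that prefers the direct path amount to letting each flow pay `shortest_ms` instead of `via_hub_ms`.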

Skipping BGP tuning and using static routes everywhere

BGP — Border Gateway Protocol — is how clouds and on-premises networks tell each other about available routes. Most teams ignore it completely. They configure static routes in Transit Gateway or Virtual WAN, assume everything works, and move on. The problem surfaces when a link dies. Static routes don’t fail over. Traffic black-holes and stays there until someone manually edits a route table at 2am.

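What you’ll observe: intermittent connectivity loss that looks completely random. You reboot something, traffic recovers, then fails again three hours later. BGP handles a dead link differently. The link’s session goes down, detected by the hold timer or much faster with BFD. The routes learned over that session are withdrawn, and traffic shifts to the surviving path without anyone touching a route table. Configuring it takes maybe 30 extra minutes. It saves you multiple 3am pages. Not a hard trade-off.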
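The difference between static and dynamic routing comes down to whether a dead next hop can drop out of the candidate list. Here’s a heavily simplified Python sketch of that idea. It assumes a lost BGP session withdraws every route learned over it. It models only two tie-breakers of real BGP best-path selection: local preference, then AS path length. The prefix and link names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Route:
    prefix: str
    next_hop: str      # link the route was learned over (hypothetical names)
    as_path_len: int
    local_pref: int = 100


def best_route(routes: list, links_up: dict):
    """Pick the preferred route whose link is still up, or None if all are down.

    Simplified: higher local_pref wins, then the shorter AS path.
    """
    alive = [r for r in routes if links_up.get(r.next_hop, False)]
    if not alive:
        return None
    return max(alive, key=lambda r: (r.local_pref, -r.as_path_len))


routes = [
    Route("10.20.0.0/16", "dx-primary", as_path_len=2, local_pref=200),
    Route("10.20.0.0/16", "vpn-backup", as_path_len=3),
]
print(best_route(routes, {"dx-primary": True, "vpn-backup": True}).next_hop)   # dx-primary
print(best_route(routes, {"dx-primary": False, "vpn-backup": True}).next_hop)  # vpn-backup
```

A static route is the equivalent of hard-coding `routes[0]`. It keeps pointing at `dx-primary` after the link dies, which is exactly the black hole described above.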

Ignoring MTU mismatch between providers

I debugged a latency issue for eight hours — eight — because Azure’s application gateway was configured at 1400-byte MTU while AWS instances sat at 1500. Every large request got fragmented. TCP retransmits kicked in. Latency went from 8ms to 250ms for roughly 2% of requests. Packet captures eventually showed the retransmissions, which pointed directly to the MTU mismatch. Eight hours for a one-line config change.

The symptom: random high-latency outliers. Not every request is slow, just enough to blow your SLOs. TCP retransmit counts will spike in netstat -s output if you know to look. Fix it in one of two ways. Size everything to the smallest MTU on the path (1400 is a common safe choice across providers), or clamp TCP MSS at the tunnel endpoints so oversized segments never get built.
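The lowest-common-denominator rule and MSS clamping both start from the same number. Here is a minimal Python sketch, assuming IPv4 or IPv6 and TCP headers without options. The per-hop MTU values are hypothetical.

```python
def path_mtu(hop_mtus: list) -> int:
    """The largest packet that crosses every hop unfragmented is the smallest hop MTU."""
    return min(hop_mtus)


def tcp_mss(mtu: int, ipv6: bool = False) -> int:
    """MSS to clamp to: MTU minus IP header (20 B IPv4, 40 B IPv6) and 20 B TCP header."""
    return mtu - (60 if ipv6 else 40)


hops = [9001, 1500, 1400, 1460]  # hypothetical: VPC, internet edge, tunnel, GCP VPC
mtu = path_mtu(hops)
print(mtu, tcp_mss(mtu), tcp_mss(mtu, ipv6=True))  # 1400 1360 1340
```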

Using public IPs where private peering is available

You don’t need to audit every packet flow on day one. You should, though, spend a few minutes checking whether your inter-cloud traffic is being NATed to public IPs when a private path exists. Some teams do this because they think it’s the only available path. It works. It’s also slower, and it exposes production endpoints to internet-borne attacks, DDoS included, in ways that private peering simply doesn’t.

You’ll notice egress costs running higher than expected, latency above baseline, and security reviewers asking uncomfortable questions about why production traffic is using public IPs at all.
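Suppose you can export flow logs into (source, destination) pairs. Those could be VPC Flow Logs on AWS and GCP, or NSG or VNet flow logs on Azure. Flagging public destinations then takes a few lines of Python with the standard `ipaddress` module. Log parsing is left out, and the workload names and addresses below are hypothetical.

```python
import ipaddress


def public_destinations(flows: list) -> list:
    """Return (source, destination) pairs whose destination is globally routable."""
    return [
        (src, dst)
        for src, dst in flows
        if ipaddress.ip_address(dst).is_global
    ]


flows = [
    ("app-aws", "10.1.2.3"),      # RFC 1918: private
    ("app-azure", "172.16.5.4"),  # RFC 1918: private
    ("app-gcp", "8.8.8.8"),       # public
]
print(public_destinations(flows))  # [('app-gcp', '8.8.8.8')]
```

Anything flagged is a candidate for private peering or interconnect. Some of it, such as calls to third-party SaaS APIs, will legitimately stay public.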

Skipping DNS failover and relying on a single authoritative nameserver

Start by checking where your authoritative DNS actually lives, at least if you have services querying across clouds. Suppose that server sits in AWS and your Azure app queries it. Every uncached lookup then includes a cross-cloud round trip. Caching aggressively helps. Once cached answers expire, though, lookups fail outright if that one nameserver is down. That’s not a resilience strategy, that’s a timer counting down to an outage.

The symptom: DNS lookup times of 50–100ms instead of 1–5ms. Health checks fail mysteriously. Failover takes forever because resolvers keep serving cached answers until the TTL expires. And your TTLs are set to 3600 seconds because someone picked a default and never changed it.
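To get lookup numbers for your own setup, time the resolver from each cloud. Here is a minimal Python sketch using the standard library’s `socket.getaddrinfo`. It measures whatever resolver path the host is configured with, including any local cache, so the first sample is usually the one that matters. `time_lookups` is a hypothetical helper. Swap `"localhost"` for your cross-cloud service name.

```python
import socket
import statistics
import time


def time_lookups(hostname: str, attempts: int = 5) -> list:
    """Wall-clock time of repeated getaddrinfo() calls, in milliseconds.

    Later attempts may be served from an OS-level cache if the host runs one.
    """
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        socket.getaddrinfo(hostname, 443)
        samples.append((time.perf_counter() - start) * 1000)
    return samples


samples = time_lookups("localhost")  # replace with the service name you depend on
print(f"first={samples[0]:.2f} ms median={statistics.median(samples):.2f} ms")
```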

How to Test and Measure Latency Across Clouds

Establish a baseline before you go live

Use iPerf3 to measure throughput between cloud regions, plus jitter and packet loss in UDP mode. Use ping for round-trip latency. Deploy iPerf servers in each cloud and run clients from each cloud against the servers in the others. Then write down the results. Actually write them down:

- AWS us-west-2 to Azure East US: 45ms latency, 800Mbps throughput over internet

- AWS us-west-2 to GCP us-central1: 32ms latency, 950Mbps throughput

- Azure to GCP: 110ms latency, 400Mbps (that gap indicates routing inefficiency worth investigating)

These numbers become your SLO anchor. Anything worse than baseline after deployment is a signal, not a mystery — it’s a misconfiguration waiting to be found.
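Writing results down is easier if iPerf3 does it for you: run it with -J to get JSON output. The Python sketch below pulls out the headline numbers. The field names it reads are assumptions based on common iPerf3 releases: end.sum_received.bits_per_second for TCP, and end.sum.jitter_ms and end.sum.lost_percent for UDP. Confirm them against your version’s output. `summarize_iperf3` is a hypothetical helper.

```python
import json


def summarize_iperf3(raw_json: str) -> dict:
    """Extract headline numbers from `iperf3 -J` output.

    Field names are assumptions based on common iPerf3 releases.
    """
    end = json.loads(raw_json)["end"]
    udp = end.get("sum", {})
    if "jitter_ms" in udp:  # UDP test: throughput plus jitter and loss
        return {
            "mbps": udp["bits_per_second"] / 1e6,
            "jitter_ms": udp["jitter_ms"],
            "loss_pct": udp["lost_percent"],
        }
    # TCP test: use what the receiver actually got, not what the sender pushed
    return {"mbps": end["sum_received"]["bits_per_second"] / 1e6}


sample = '{"end": {"sum_received": {"bits_per_second": 800000000}}}'
print(summarize_iperf3(sample))  # {'mbps': 800.0}
```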

Use traceroute and ping for real-world paths

Ping gives you round-trip time but hides where delays actually occur. Traceroute shows every hop. Run traceroute from your application server in Cloud A to a service IP in Cloud B. Note both the hop count and the hop where round-trip times jump. More than 15–20 hops usually hints at suboptimal routing, with traffic bouncing through regions it has no business visiting.

Compare traceroute output before and after config changes. If a clean 8-hop path becomes a 24-hop path after a routing update, something broke and you now have the evidence to prove it.
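Comparing before-and-after paths is easy to automate. Here is a minimal Python sketch that reads standard Linux traceroute text output and returns the highest hop number. Timed-out hops printed as `* * *` still count. The helper names, the `slack` threshold, and the sample output and addresses are hypothetical.

```python
import re

# A hop line starts with the hop number: " 3  10.20.0.5  8.1 ms ..."
HOP_LINE = re.compile(r"^\s*(\d+)\s")


def hop_count(traceroute_output: str) -> int:
    """Highest hop number in traceroute output, or 0 if none are found."""
    hops = [int(m.group(1)) for line in traceroute_output.splitlines()
            if (m := HOP_LINE.match(line))]
    return max(hops, default=0)


def path_grew(before: str, after: str, slack: int = 2) -> bool:
    """True if the new path is more than `slack` hops longer than the old one."""
    return hop_count(after) > hop_count(before) + slack


before = """traceroute to 10.20.0.5 (10.20.0.5), 30 hops max, 60 byte packets
 1  10.0.0.1  0.412 ms  0.380 ms  0.377 ms
 2  * * *
 3  10.20.0.5  8.104 ms  8.020 ms  8.211 ms"""
print(hop_count(before))  # 3
```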

AWS Reachability Analyzer for simplified troubleshooting

AWS Reachability Analyzer tests connectivity between EC2 instances, load balancers, and endpoints by analyzing your network configuration. It sends no packets at all. It reports reachability status and surfaces blockers like overly restrictive security groups or NACLs. It isn’t a latency tool. It is invaluable for confirming connectivity exists at all before you start worrying about optimizing performance.
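Reachability Analyzer can also be driven from code, which makes it easy to re-run after every change. This is a minimal Python sketch with a few assumptions. You pass in a boto3 EC2 client (`boto3.client("ec2")`) with permissions for the network-insights APIs. The resource IDs are placeholders, and `path_reachable` is a hypothetical helper.

```python
import time


def path_reachable(ec2, source_id: str, destination_id: str, port: int = 443,
                   poll_seconds: float = 5.0):
    """Run one Reachability Analyzer check; return (path_found, explanations).

    `ec2` is assumed to be a boto3 EC2 client, e.g. boto3.client("ec2").
    """
    path_id = ec2.create_network_insights_path(
        Source=source_id,
        Destination=destination_id,
        Protocol="tcp",
        DestinationPort=port,
    )["NetworkInsightsPath"]["NetworkInsightsPathId"]

    analysis_id = ec2.start_network_insights_analysis(
        NetworkInsightsPathId=path_id
    )["NetworkInsightsAnalysis"]["NetworkInsightsAnalysisId"]

    # Poll until the analysis leaves the "running" state.
    while True:
        analysis = ec2.describe_network_insights_analyses(
            NetworkInsightsAnalysisIds=[analysis_id]
        )["NetworkInsightsAnalyses"][0]
        if analysis["Status"] != "running":
            # Explanations list the blocking component when no path is found.
            return analysis.get("NetworkPathFound", False), analysis.get("Explanations", [])
        time.sleep(poll_seconds)
```

Each call creates a new path resource. Reuse it for repeat checks, or remove it with `delete_network_insights_path` when you’re done.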

GCP Network Intelligence Center for path visualization

GCP Network Intelligence Center traces the path your configuration gives traffic through Google’s network. It flags misconfigurations such as blocking firewall rules or missing routes, and it suggests optimizations it can identify automatically. Run a connectivity test between two services to get back the configured path. For supported endpoint pairs, the test also runs a live data-plane analysis that reports observed packet loss and latency. It’s genuinely useful and most teams don’t know it exists.

Cloud-native monitoring for ongoing validation

After baselines are documented, set up continuous monitoring. CloudWatch alarms for latency percentiles, BGP route changes, and packet loss. Alert if p95 latency exceeds your documented baseline by more than 20%. Test every 5 minutes, not daily — daily monitoring catches problems after customers already have.
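The 20% rule is simple enough to express directly. This Python sketch uses the nearest-rank percentile method, and CloudWatch or other tools may compute percentiles slightly differently. The baseline is whatever you documented. The helper names and sample window are hypothetical.

```python
import math


def percentile(samples: list, pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def breaches_baseline(samples_ms: list, baseline_p95_ms: float, tolerance: float = 0.20) -> bool:
    """True when observed p95 exceeds the documented baseline by more than tolerance."""
    return percentile(samples_ms, 95) > baseline_p95_ms * (1 + tolerance)


window = [float(ms) for ms in range(1, 101)]  # hypothetical 5-minute window of samples
print(percentile(window, 95))           # 95.0
print(breaches_baseline(window, 70.0))  # True: 95 > 84
```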

A Practical Checklist Before You Go Live

- Confirm private connectivity — VPC/VNet/Network peering configured, no public IP routing for inter-cloud traffic, Direct Connect or Interconnect provisioned if applicable

- Verify BGP routes — BGP neighbors up, route advertisements confirmed, failover tested by actually killing one link and watching automatic rerouting happen

- Align MTU across all clouds — Check instance/NIC MTU on AWS and Azure, the VPC network MTU on GCP, and the MTU of every VPN tunnel and interconnect attachment in between. Set everything to the lowest common denominator, usually 1400

- Document latency baseline — iPerf and traceroute results recorded for each cloud-pair, p50/p95 latency thresholds defined and written somewhere findable

- Enable monitoring and alerts — CloudWatch, Azure Monitor, or GCP Operations configured to track latency, packet loss, and BGP state; alerts set for deviations from your documented baseline

- Test DNS resolution — Query times from each cloud to your authoritative nameserver measured and recorded; secondary and tertiary nameservers configured

- Security group and firewall review — All necessary ports open, no unintended blocking, logging enabled so misconfigurations leave evidence

Print this list. Share it with your team. Check every item before production traffic crosses cloud boundaries. The 90 minutes spent here prevents the 8-hour debugging session — the one where customers are already filing tickets and your Slack is a disaster. I know because I’ve lived both versions of that story, and one of them is considerably worse than the other.
