Why Data Transfer Bills Catch Teams Off Guard

Multi-cloud billing is complicated, and nowhere more so than in data transfer costs. Most engineering teams pour serious energy into optimizing compute and storage during cloud planning. They sweat over instance sizes. They negotiate reserved capacity discounts. They build clever storage tiering strategies. Then the bill lands and there’s a $40,000 egress charge sitting there like it owns the place.

I’ve spent years working across cloud infrastructure projects, and I’ve walked into this particular budget ambush more than once. Here is what I learned.

But what is a data transfer fee? In essence, it’s a charge your cloud provider collects every time data leaves their network — crossing regions, jumping to another provider, or hitting the public internet. But it’s much more than that. It’s a structural feature of how cloud billing works, and it compounds in ways most teams never model ahead of time. AWS charges $0.09 per GB leaving US East to the public internet. Google Cloud charges $0.12 per GB for the same scenario out of us-central1. Azure sits at $0.08 per GB from East US. Small numbers. Until you’re moving terabytes every single day.
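To see how quickly those small per-gigabyte numbers grow, here is a minimal Python sketch using the list rates quoted above. The 1 TB daily volume and the 30-day month are illustrative assumptions, and the flat rate ignores the tiered pricing real bills use.

```python
# Headline internet egress rates quoted above, in USD per GB.
EGRESS_USD_PER_GB = {"aws": 0.09, "gcp": 0.12, "azure": 0.08}


def monthly_egress_cost(gb_per_day, rate_per_gb, days=30):
    """Flat-rate estimate: daily volume x days x per-GB rate."""
    return gb_per_day * days * rate_per_gb


# Assumed workload: 1 TB (1,000 GB) leaving each provider every day.
for provider, rate in EGRESS_USD_PER_GB.items():
    print(f"{provider}: ${monthly_egress_cost(1000, rate):,.2f} per month")
```

At that assumed volume the three headline rates land between $2,400 and $3,600 a month, before any compute or storage.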

The real problem isn’t the per-gigabyte rate. These fees hide behind vague billing category names like “DataTransfer” or “Egress.” They don’t show up in pricing calculators with the same prominence as compute costs. Sales teams certainly don’t highlight them during initial pitches. Teams discover them retroactively — usually when the monthly bill doubles and nobody can explain the delta.

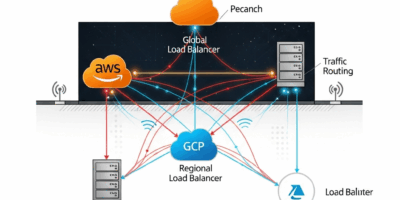

Multi-cloud architectures make everything worse here. Operating across AWS, GCP, and Azure simultaneously means you’re not paying one provider’s egress fees. You’re paying fees on every data handoff between platforms. A dataset moving from S3 to Cloud Storage incurs AWS egress charges, plus any ingestion or processing charges on the GCP side. Replicate that across regions in a multi-cloud setup and fees compound in ways single-provider shops never experience.

I learned this the expensive way. A project I managed moved analytics data between AWS and BigQuery on an hourly schedule. S3 costs were fine. BigQuery compute costs were fine. But nobody budgeted for the transfer layer. Three months in, that single pipeline was running $8,000 monthly — just in egress. Nearly triple what we spent on actual data processing. Don’t make my mistake.

The Three Transfer Scenarios That Cost the Most

Cloud-to-Cloud Egress Between Providers

This is the scenario that blindsided my team. Exporting data from AWS S3 to a GCP BigQuery instance means AWS charges you for every gigabyte leaving their network — $0.09 per GB for US regions at the highest egress tier. For 100 GB daily, that’s $270 monthly just to get data out the door. Before touching anything else.

GCP looks cheap on the receiving end at first glance, since inbound transfer itself is free. But streaming ingestion into BigQuery runs about $0.05 per GB (batch loads are free), separate from whatever egress the source already charged. Combined with AWS egress, a single AWS-to-GCP streaming pipeline can hit $0.14 per GB moved. Azure has the lowest headline egress rate of the three at $0.08, but regional surcharges push certain routes higher than that rate suggests.

What nobody tells you upfront: the per-gigabyte rate stays flat, but your volume doesn’t. It scales with your ambitions. A 5 GB weekly export costs pocket change. But when teams discover multi-cloud architectures actually work and start moving 500 GB daily between providers, the math becomes $1,350 monthly in AWS egress alone, or about $2,100 at the combined $0.14 rate, and every additional pipeline multiplies it. Before compute. Before storage. Before anything else that shows up on the bill.

Cross-Region Replication Within Multi-Cloud

Probably should have opened with this section, honestly. Replicating data across regions within a single provider is cheaper than inter-cloud transfer — but it adds up fast. AWS charges $0.02 per GB to move data between US regions, $0.04 between continents. A multi-cloud strategy distributing workloads across AWS us-east, GCP us-central, and Azure eastus means replicating datasets across all three platforms for redundancy and latency reasons.

In the worst case that’s three egress operations plus three ingestion operations per dataset per replication cycle. Replicating 50 GB hourly is about 36,000 GB a month. At the $0.02 per GB cross-region rate, each additional copy costs about $720, so keeping two replicas runs roughly $1,440 monthly on cross-region movement alone. Copies that cross providers are billed at the higher internet egress rate, on top of that. Engineers often architect for availability without calculating what data redundancy actually costs at scale. That’s the gap that shows up on month-three invoices.
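The replication arithmetic can be sketched in a few lines of Python. This is a rough model, assuming a 30-day month, a flat per-GB rate, and that every extra copy re-sends the full dataset each hour; the function and parameter names are illustrative.

```python
def monthly_replication_cost(gb_per_hour, extra_copies, rate_per_gb, hours=24 * 30):
    """Cost of shipping every hourly batch to each additional replica."""
    gb_per_month = gb_per_hour * hours
    return gb_per_month * rate_per_gb * extra_copies


# 50 GB hourly, two extra replicas, $0.02/GB between US regions.
same_continent = monthly_replication_cost(50, extra_copies=2, rate_per_gb=0.02)

# Same workload if the replicas sit on another continent ($0.04/GB).
cross_continent = monthly_replication_cost(50, extra_copies=2, rate_per_gb=0.04)

print(f"US regions:      ${same_continent:,.0f} per month")
print(f"cross-continent: ${cross_continent:,.0f} per month")
```

Changing `extra_copies` or `rate_per_gb` shows how much each added replica or longer route costs before it is provisioned.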

Data Pulling Back to On-Premises or User Endpoints

This one catches teams off guard months into production — not at launch, but later, when usage patterns mature. A hybrid architecture syncing data from cloud back to on-prem storage, or to user laptops for offline access, generates outbound transfer fees just like cloud-to-cloud movement does.

AWS egress to on-prem sits at $0.09 per GB. GCP’s comparable fee is $0.12. Syncing a 200 GB database backup to on-prem weekly costs about $18 per sync, or roughly $78 monthly, just for pulling data home. A team of 50 people pulling 500 MB files weekly adds about 108 GB a month, a little under $10 in AWS egress. Small numbers at that scale, but they grow linearly with volume: run the same sync daily against a 2 TB database and it’s about $5,400 monthly. Nobody models this during initial architecture reviews. It’s just users downloading stuff, right? Except at cloud scale, users downloading stuff has a very specific price tag.

How to Find Where Your Transfer Costs Are Coming From

AWS Cost Explorer Method

Open AWS Cost Explorer. Filter by Service and select the services that move your data (EC2 and S3 are the usual sources). Then filter by Usage Type and search for “DataTransfer.” Breakdowns appear, usually prefixed with a region code: DataTransfer-Regional-Bytes, DataTransfer-Out-Bytes, and CloudFront-related transfer. The line that matters for multi-cloud costs is usually DataTransfer-Out-Bytes from your specific region.

Export to CSV. Sort by cost descending. You’ll immediately see which regions, services, and days generated the biggest egress charges. Look for patterns. DataTransfer spiking every Tuesday at 2 AM? That’s probably an automated backup or replication job nobody documented. That’s where you start cutting.
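The sort-and-look-for-patterns step can be scripted. The sketch below assumes a simplified CSV with `date`, `usage_type`, and `cost_usd` columns. Those column names and the sample rows are hypothetical, and a real Cost Explorer export is laid out differently, so the export would need reshaping first.

```python
import csv
import io
from collections import defaultdict
from datetime import date

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def summarize_transfer_costs(csv_text):
    """Total DataTransfer cost by usage type and by weekday, highest first."""
    by_usage_type = defaultdict(float)
    by_weekday = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if "DataTransfer" not in row["usage_type"]:
            continue  # skip compute, storage, and other line items
        cost = float(row["cost_usd"])
        by_usage_type[row["usage_type"]] += cost
        by_weekday[WEEKDAYS[date.fromisoformat(row["date"]).weekday()]] += cost

    def ranked(totals):
        return sorted(totals.items(), key=lambda item: item[1], reverse=True)

    return ranked(by_usage_type), ranked(by_weekday)


# Hypothetical sample rows, for illustration only.
SAMPLE = """date,usage_type,cost_usd
2024-03-04,USE1-DataTransfer-Out-Bytes,12.50
2024-03-05,USE1-DataTransfer-Out-Bytes,310.00
2024-03-05,USE1-DataTransfer-Regional-Bytes,4.20
2024-03-06,USE1-BoxUsage:m5.large,96.00
2024-03-12,USE1-DataTransfer-Out-Bytes,298.75
"""

usage_totals, weekday_totals = summarize_transfer_costs(SAMPLE)
print(usage_totals[0])    # most expensive transfer usage type
print(weekday_totals[0])  # weekday with the most transfer spend
```

In the sample data the Tuesday rows dominate, which is the kind of recurring spike that usually points to a scheduled job.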

GCP Billing Export Approach

GCP’s native billing dashboard is genuinely vague about transfer costs. Export billing data to BigQuery instead — it’s where the real detail lives. Run this query:

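```sql
-- Egress and data transfer SKUs by service and day, most expensive first.
SELECT
  DATE(usage_start_time) AS usage_day,
  service.description AS service_name,
  sku.description AS sku_name,
  SUM(usage.amount) AS total_usage,
  SUM(cost) AS total_cost
FROM `project.dataset.gcp_billing_export_v1_XXXXXX`
WHERE sku.description LIKE '%Data Transfer%'
   OR sku.description LIKE '%Egress%'
GROUP BY usage_day, service_name, sku_name
ORDER BY total_cost DESC
```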

That surfaces exactly which services generated egress charges and what they cost. You’ll see line items like “BigQuery Data Transfer” and “Compute Engine Network Egress” broken out by day. Much more useful than anything the dashboard shows you directly.

Azure Cost Analysis

Azure Cost Analysis offers Meter Category filtering — navigate to Cost Analysis inside your subscription, then filter Meter Category to “Bandwidth” or “Data Transfer.” Group by Resource Group afterward to see which specific workloads are generating egress charges. Azure’s interface is honestly cleaner than AWS for this particular analysis. Small consolation given the bills, but worth noting.

Third-Party Cross-Cloud Visibility

Native tools don’t show you the complete picture if you’re actually operating multi-cloud. Tools like Spot.io’s cost intelligence platform or CloudHealth connect to all three providers and show aggregate transfer costs across your entire infrastructure. Where free tiers exist, they cover basic reporting. Paid versions add cost forecasting and optimization recommendations specific to data transfer patterns, not just overall cloud spend.

Fixes That Actually Reduce Your Egress Bill

Private Interconnects — The Expensive Fix That Works

AWS Direct Connect and Google Cloud Interconnect are private network connections between your cloud infrastructure and on-prem facilities. Direct Connect runs about $0.30 per hour for a 1 Gbps dedicated port plus $0.02 per GB transferred out. Sounds expensive. Compare it to egress fees at real volume.

Moving 1 TB daily between AWS and on-prem via public internet costs $2,700 monthly in egress alone. That same traffic over a 1 Gbps Direct Connect runs roughly $216 in hourly port fees plus $600 in transfer, $816 total. Setting aside one-time installation and cross-connect fees, the direct connection pays for itself in month one and saves about $1,884 monthly. The math is not subtle.
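The comparison is easy to rerun for your own volume. The sketch below uses the rates quoted above and assumes a 720-hour month; it ignores one-time setup, cross-connect fees, and tiered pricing, so treat the break-even point as a rough guide.

```python
HOURS_PER_MONTH = 720  # assumed 30-day month


def public_egress_cost(gb_per_month, egress_rate=0.09):
    """Monthly cost of sending the data over the public internet."""
    return gb_per_month * egress_rate


def direct_connect_cost(gb_per_month, port_rate_per_hour=0.30, transfer_rate=0.02):
    """Monthly port-hour fees plus per-GB transfer over the private link."""
    return port_rate_per_hour * HOURS_PER_MONTH + gb_per_month * transfer_rate


def break_even_gb_per_month(egress_rate=0.09, port_rate_per_hour=0.30, transfer_rate=0.02):
    """Monthly volume above which the private link is the cheaper option."""
    return port_rate_per_hour * HOURS_PER_MONTH / (egress_rate - transfer_rate)


volume = 1000 * 30  # 1 TB a day, expressed in GB per month
public = public_egress_cost(volume)
private = direct_connect_cost(volume)
print(f"public internet: ${public:,.0f}")
print(f"direct connect:  ${private:,.0f}")
print(f"monthly savings: ${public - private:,.0f}")
print(f"break-even:      {break_even_gb_per_month():,.0f} GB per month")
```

Under these assumptions the break-even point sits a little above 3 TB a month, or roughly 100 GB a day. Below that, the port fee outweighs the cheaper per-GB rate.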

This is an architectural change, not a quick win. Network engineering work is involved, and physical connectivity installation takes time. But for high-volume, ongoing data movement — the kind that shows up as multi-thousand-dollar line items — it’s the most cost-effective structural solution available.

Co-location — The Quick Win

Place workloads that communicate frequently in the same region and provider. Running Kafka in AWS us-east with consumers in GCP us-central means every message crossing between them incurs egress fees. Move the consumers to AWS us-east and that traffic stays inside one region, where it is free within an Availability Zone and about $0.01 per GB in each direction across zones. Or move both workloads to the same provider entirely.

No new infrastructure required. It’s a deployment change. Map data dependencies before provisioning: identify which services transfer large amounts of data between each other, then co-locate those services. This single architectural decision can cut egress costs by 60-70% without touching infrastructure spend. That’s probably the highest-leverage optimization on this list.
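The dependency-mapping step can be as simple as a list of flows and a placement table. In the sketch below, the service names, placements, and volumes are all hypothetical; the point is to rank the flows that cross a provider or region boundary so the largest ones are considered for co-location first.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    source: str
    target: str
    gb_per_day: float


# Hypothetical placement of each service: (provider, region).
PLACEMENT = {
    "kafka": ("aws", "us-east-1"),
    "api": ("aws", "us-east-1"),
    "consumers": ("gcp", "us-central1"),
    "warehouse": ("gcp", "us-central1"),
}

# Hypothetical daily volumes between services.
FLOWS = [
    Flow("kafka", "consumers", 400.0),
    Flow("consumers", "warehouse", 350.0),
    Flow("api", "kafka", 120.0),
    Flow("warehouse", "api", 15.0),
]


def boundary_crossing_flows(flows, placement):
    """Flows whose endpoints differ in provider or region, largest first."""
    crossing = [f for f in flows if placement[f.source] != placement[f.target]]
    return sorted(crossing, key=lambda f: f.gb_per_day, reverse=True)


for flow in boundary_crossing_flows(FLOWS, PLACEMENT):
    print(f"{flow.source} -> {flow.target}: {flow.gb_per_day:.0f} GB/day crosses a boundary")
```

Here the Kafka-to-consumers flow tops the list, which matches the example above: moving the consumers next to Kafka removes the largest billable hop.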

Data Compression and Caching

Compress before transfer. Gzip typically achieves 60-80% reduction on JSON and text data. Parquet handles columnar data even better. A 500 GB daily transfer compressed to 200 GB saves about $810 monthly at AWS egress rates (300 GB a day at $0.09 per GB): same data, smaller bill, minor application code change. Caching frequently accessed data at CDN endpoints or in regional caches reduces how often you pull from origin storage at all.
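Measuring the reduction on your own payloads takes only the standard library. The records below are synthetic and highly repetitive, so they will likely compress better than real exports; the field names are made up for illustration.

```python
import gzip
import json


def gzip_reduction(payload: bytes) -> float:
    """Fraction of bytes removed by gzip (0.75 means 75% smaller)."""
    return 1 - len(gzip.compress(payload)) / len(payload)


# Synthetic JSON export; real data will be less repetitive than this.
records = [
    {"event_id": i, "event_type": "page_view", "region": "us-east-1", "status": "ok"}
    for i in range(10_000)
]
payload = json.dumps(records).encode("utf-8")

compressed = gzip.compress(payload)
assert gzip.decompress(compressed) == payload  # compression is lossless

print(f"raw:       {len(payload):,} bytes")
print(f"gzip:      {len(compressed):,} bytes")
print(f"reduction: {gzip_reduction(payload):.0%}")
```

Running this against a sample of a real export gives a more honest number to plug into the egress estimate than any rule of thumb.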

I’m apparently a gzip evangelist at this point, and I’ve yet to see the case against compressing before transfer hold up. The implementation effort is low. The savings are not.

What to Set Up Before Your Next Cloud Deployment

Map data flows between all services before you provision anything. Draw diagrams showing where data originates, where it’s processed, where it lands. Estimate transfer volumes for each connection. Run those volumes against provider egress rates to calculate transfer costs alongside compute and storage — not after, alongside. Tag all workloads generating or consuming large data volumes with a “transfer-heavy” tag from day one.

Configure billing alerts specifically on DataTransfer line items — not just overall cloud spend. Set the threshold at 20% above your baseline. When it triggers, you catch unexpected transfer patterns before they burn through a full billing cycle. That’s the difference between a $1,200 surprise and a $40,000 one.
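The threshold logic is simple enough to sketch. The version below is a sketch under stated assumptions: it takes the average of recent months as the baseline, projects the current month linearly from month-to-date spend, and uses a 20% margin. The function name and sample figures are illustrative, and in practice the check would live in each provider’s budget or alerting service.

```python
from statistics import mean


def transfer_alert(history_usd, month_to_date_usd, day_of_month,
                   days_in_month=30, margin=0.20):
    """Flag the month if projected transfer spend exceeds baseline + margin."""
    baseline = mean(history_usd)
    threshold = baseline * (1 + margin)
    # Linear projection: assume the rest of the month matches the pace so far.
    projected = month_to_date_usd / day_of_month * days_in_month
    return {
        "baseline": baseline,
        "threshold": threshold,
        "projected": projected,
        "alert": projected > threshold,
    }


# Hypothetical history: transfer spend for the last three months.
history = [1000, 1100, 900]

print(transfer_alert(history, month_to_date_usd=350, day_of_month=10))
print(transfer_alert(history, month_to_date_usd=600, day_of_month=10))
```

Projecting from month-to-date spend is what lets the alert fire on day 10 instead of after the invoice closes.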

Review each provider’s free egress tiers before finalizing architecture decisions. AWS includes 100 GB of free data transfer out to the internet each month, aggregated across most services and regions. GCP doesn’t charge egress to certain Google services. Azure offers free regional transfer under specific conditions. Know these limits for your specific setup, because they’re real money at small-to-medium scales.

One thing to do today: open your cloud billing dashboard right now. Find the DataTransfer line item. See what it cost last month. If you can’t locate it in three minutes, that’s exactly why bills keep surprising you. Spend fifteen minutes learning where that number lives in your provider’s interface. That fifteen minutes is worth more than any optimization framework — because you can’t fix what you can’t find.
