AWS vs Azure vs GCP — Which Cloud Actually Saves You Money in 2026

Cloud cost comparisons have gotten complicated with all the marketing noise flying around. Having survived three startup migrations and two full enterprise overhauls, I’ve learned a lot about what cloud bills actually look like in production, and this article lays it out.

Fair warning — I got it badly wrong the first time. Assumed AWS was the obvious default because everybody used it. Signed a three-year Reserved Instance contract on a compute family that got deprecated eight months in. Watched roughly $40,000 disappear. That was 2022. Since then I’ve become genuinely obsessed with real billing data — not the marketing pages, not the “contact sales” enterprise tiers. The actual invoices. The egress line items that appear like a charge from a restaurant you didn’t know was billing you for bread.

Most cloud comparisons refuse to run real math on equivalent workloads. This one does. Monthly bills for real workload shapes, not per-hour sticker prices sitting in a vacuum. The answer shifts dramatically depending on what you’re actually running — and pretending there’s one universal winner in 2026 is exactly how engineering teams end up locked into contracts that don’t fit them.

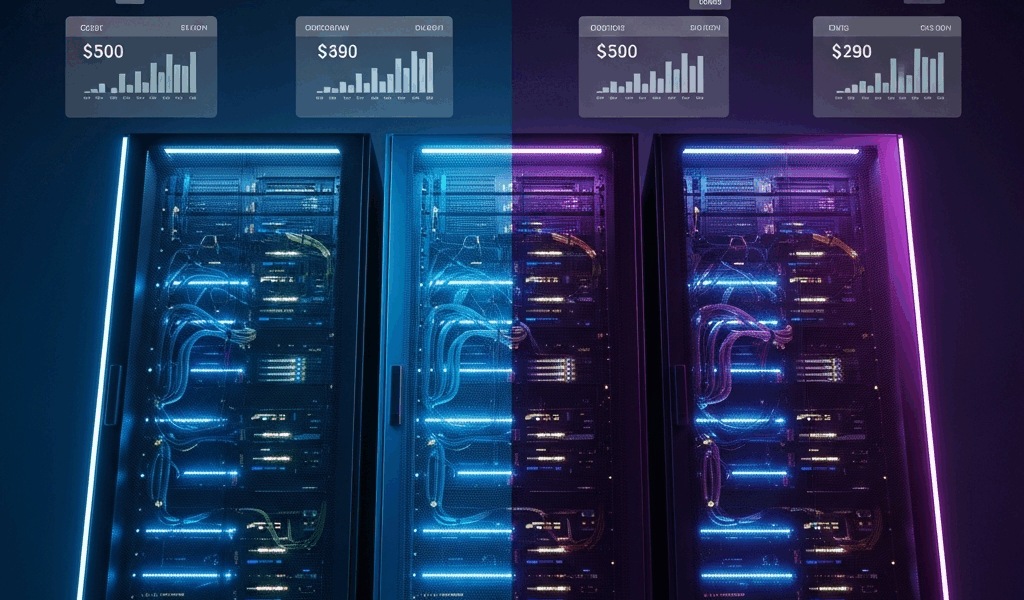

Compute Cost Per Hour — Real Numbers Side by Side

Start with the baseline: equivalent general-purpose compute across all three providers, on-demand Linux pricing in US East regions, early 2026 rates.

General Purpose — 8 vCPU, 32 GB RAM

This is the workhorse tier: the instance class powering most web applications and API services, and the tier where small percentage differences compound into serious annual savings. The instances here are AWS m7i.2xlarge, Azure D8s v5, and GCP n2-standard-8. They’re reasonably close architectural equivalents. Not perfect, but as close as the market offers right now.

- AWS m7i.2xlarge — $0.4032/hr on-demand | $0.2479/hr 1-year Reserved (no upfront) | ~$0.121/hr Spot (variable)

- Azure D8s v5 — $0.384/hr on-demand | $0.238/hr 1-year Reserved | ~$0.144/hr Spot

- GCP n2-standard-8 — $0.3820/hr on-demand | $0.2294/hr 1-year Committed Use | ~$0.1146/hr Spot (Preemptible)

GCP runs roughly 5 to 7 percent cheaper than AWS on raw compute, and edges out Azure by a slimmer margin. Not enormous in isolation. Run 50 instances around the clock for a year, though, and the gap to AWS works out to roughly $8,000 to $9,300 at the rates above, before touching anything else. GCP also applies Sustained Use Discounts automatically: run an eligible instance past 25 percent of a month and you get a discount without committing to a thing. That single policy difference matters a lot for workloads that aren’t perfectly predictable.
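Fleet-level savings are easy to sanity-check from the hourly rates. Here is a minimal sketch, assuming 24/7 usage and an 8,760-hour year; the function names are mine, and the rates are simply the ones listed above.

```python
HOURS_PER_YEAR = 8760  # 24 * 365, ignoring leap years

# $/hr for the 8 vCPU / 32 GB tier, copied from the list above.
RATES = {
    "aws": {"on_demand": 0.4032, "committed": 0.2479},
    "azure": {"on_demand": 0.3840, "committed": 0.2380},
    "gcp": {"on_demand": 0.3820, "committed": 0.2294},
}


def annual_fleet_cost(rate_per_hour: float, instances: int) -> float:
    """Cost of running `instances` machines around the clock for one year."""
    return rate_per_hour * HOURS_PER_YEAR * instances


def annual_savings(cheaper: str, pricier: str, tier: str, instances: int) -> float:
    """Dollars saved per year by running the fleet on `cheaper` instead of `pricier`."""
    return (annual_fleet_cost(RATES[pricier][tier], instances)
            - annual_fleet_cost(RATES[cheaper][tier], instances))


if __name__ == "__main__":
    for tier in ("on_demand", "committed"):
        for other in ("aws", "azure"):
            saved = annual_savings("gcp", other, tier, instances=50)
            print(f"GCP vs {other} ({tier}): ${saved:,.0f} per year")
```

Swap in your own instance count and negotiated rates; the point is to work from rate deltas rather than headline percentages.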

Memory-Optimized — 16 vCPU, 128 GB RAM

Database hosts, in-memory caches, analytics engines — they all live here. Comparing AWS r7i.4xlarge, Azure E16s v5, and GCP m3-standard-16.

- AWS r7i.4xlarge — $1.0080/hr on-demand | $0.6199/hr 1-year Reserved

- Azure E16s v5 — $1.008/hr on-demand | $0.6249/hr 1-year Reserved

- GCP m3-standard-16 — $0.9424/hr on-demand | $0.5654/hr 1-year Committed Use

GCP’s advantage holds here and actually widens slightly at the reserved and committed tier. AWS and Azure are nearly identical on memory-optimized pricing, a pattern that repeats across most instance families. They’ve essentially matched each other, leaving GCP as the consistent price leader on raw compute numbers. That’s what makes GCP’s pricing model endearing to us cost-obsessed infrastructure people.

Spot and Preemptible Pricing — The Wildcard

Spot pricing is where things get genuinely unpredictable. AWS Spot can save you 70 to 90 percent off on-demand, but interruption rates vary dramatically by instance family and availability zone. My luck with capacity crunches is mixed: I’ve run batch ML workloads on AWS Spot with zero interruptions for six weeks, then watched a job die three times in one afternoon. GCP Preemptible instances have a hard 24-hour maximum runtime, a real constraint for long-running jobs; the newer Spot VMs drop that cap but can still be reclaimed at any time. Azure Spot sits somewhere in between, with lower interruption rates in my experience but often a smaller discount.

For fault-tolerant workloads — batch processing, model training, rendering — Spot pricing can make AWS the cheapest option despite higher on-demand rates. For anything requiring stability, the calculus flips back toward GCP’s committed use model.
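Whether Spot actually wins depends on how much work each interruption throws away. The sketch below is a deliberately simple model of my own, not anything the providers publish: every interruption costs a fixed number of redone hours, and the Spot rate stays constant for the whole job.

```python
def effective_spot_cost(spot_rate: float, job_hours: float,
                        interruptions: float, lost_hours_each: float) -> float:
    """Spot bill for a job, counting the work redone after each interruption."""
    billed_hours = job_hours + interruptions * lost_hours_each
    return spot_rate * billed_hours


def break_even_interruptions(spot_rate: float, stable_rate: float,
                             job_hours: float, lost_hours_each: float) -> float:
    """Number of interruptions at which the Spot bill equals the stable-rate bill."""
    headroom = (stable_rate - spot_rate) * job_hours
    return headroom / (spot_rate * lost_hours_each)


if __name__ == "__main__":
    # Rates from the 8 vCPU tier above; job length and rework hours are made up.
    aws_spot, gcp_committed = 0.121, 0.2294
    job_hours, lost_hours_each = 100.0, 2.0
    for n in (0, 3, 10):
        cost = effective_spot_cost(aws_spot, job_hours, n, lost_hours_each)
        print(f"{n:2d} interruptions: ${cost:.2f} on Spot "
              f"vs ${gcp_committed * job_hours:.2f} committed")
    limit = break_even_interruptions(aws_spot, gcp_committed, job_hours, lost_hours_each)
    print(f"Spot stops paying off after about {limit:.0f} interruptions")
```

The less work you lose per interruption, the more interruptions Spot can absorb before the committed rate catches up, which is why checkpoint frequency matters as much as the discount.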

The Hidden Cost — Data Egress Kills Your Budget

Probably should have opened with this section, honestly. Compute pricing is a red herring for any application that moves data — and most applications move a lot of data. Egress fees are where cloud providers quietly recover margin, and the differences between AWS, Azure, and GCP are dramatic enough to flip the entire cost comparison on its head.

Standard Egress Pricing — Internet Traffic

- AWS — First 10 TB/month: $0.09/GB | Next 40 TB: $0.085/GB | Next 100 TB: $0.07/GB

- Azure — First 10 TB/month: $0.087/GB | Next 40 TB: $0.083/GB | Next 100 TB: $0.07/GB

- GCP — First 1 TB/month: $0.12/GB | Next 9 TB: $0.11/GB | 10 TB+: $0.08/GB

GCP’s egress pricing is actually higher on small volumes, which surprises most people who assume GCP wins everywhere. Its marginal rate only drops below AWS and Azure past 10 TB per month. But here’s what none of these numbers capture: GCP runs a free egress program for traffic going to Cloudflare, Fastly, and several other CDN partners. If your architecture routes GCP compute behind a CDN (and most SaaS products should), your effective egress cost can drop to near zero for the majority of your traffic. That changes everything.
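Tiered pricing is easy to get wrong by multiplying the whole volume by a single rate. Here is a rough sketch that fills each tier in turn, assuming decimal terabytes (1 TB = 1,000 GB) and only the tiers listed above; the table layout and function name are mine.

```python
GB_PER_TB = 1000  # assumption: decimal terabytes; some invoices use 1024

# (tier size in TB, $/GB) in order. None marks an open-ended final tier.
EGRESS_TIERS = {
    "aws": [(10, 0.09), (40, 0.085), (100, 0.07)],
    "azure": [(10, 0.087), (40, 0.083), (100, 0.07)],
    "gcp": [(1, 0.12), (9, 0.11), (None, 0.08)],
}


def egress_cost(provider: str, tb: float) -> float:
    """Monthly internet egress bill, filling each pricing tier in turn."""
    remaining = tb
    total = 0.0
    for size_tb, per_gb in EGRESS_TIERS[provider]:
        if remaining <= 0:
            break
        chunk = remaining if size_tb is None else min(remaining, size_tb)
        total += chunk * GB_PER_TB * per_gb
        remaining -= chunk
    if remaining > 0:
        # The list above stops at 150 TB for AWS and Azure.
        raise ValueError(f"{tb} TB exceeds the tiers listed for {provider}")
    return total


if __name__ == "__main__":
    for volume_tb in (1, 10, 50):
        row = ", ".join(f"{p}: ${egress_cost(p, volume_tb):,.0f}" for p in EGRESS_TIERS)
        print(f"{volume_tb:>3} TB -> {row}")
```

Run it across your own monthly volumes before trusting any single per-GB figure, including mine.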

What a Real SaaS Monthly Bill Looks Like

Burned by abstractions too many times, I now run these comparisons on actual workload profiles. Here’s a mid-size SaaS scenario: 20 application servers at 4 vCPU/16 GB RAM, running 24/7, serving 50 TB of data per month, with 5 TB of inter-region replication.

AWS — Monthly Estimate

- 20x m7i.xlarge, 1-year Reserved: ~$2,380/month

- 50 TB egress to internet: ~$4,250/month

- 5 TB inter-region transfer: ~$500/month

- Total: ~$7,130/month

Azure — Monthly Estimate

- 20x D4s v5, 1-year Reserved: ~$2,280/month

- 50 TB egress to internet: ~$4,150/month

- 5 TB inter-region transfer: ~$500/month

- Total: ~$6,930/month

GCP — Monthly Estimate (with CDN partner routing, 80% of traffic)

- 20x n2-standard-4, 1-year Committed Use: ~$2,190/month

- 10 TB egress to internet (remaining 20%): ~$1,110/month

- 5 TB inter-region transfer: ~$480/month

- Total: ~$3,780/month

That’s not a single-digit percentage difference anymore. GCP comes in roughly 47 percent below AWS for this specific workload. The catch: you need to architect around CDN-routed traffic, which not every application handles cleanly. But for consumer SaaS with static assets, APIs behind a CDN, and standard web serving patterns, it’s absolutely achievable.
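The GCP figure is mostly a function of how much traffic the CDN absorbs, so it is worth modelling that share as a variable instead of a fixed 80 percent. A minimal sketch, assuming the GCP egress tiers listed earlier, decimal terabytes, CDN-routed traffic billed at zero, and the compute and inter-region line items from the GCP estimate held constant:

```python
GB_PER_TB = 1000  # assumption: decimal terabytes

# GCP internet egress tiers from earlier: (tier size in TB, $/GB).
GCP_TIERS = [(1, 0.12), (9, 0.11), (float("inf"), 0.08)]


def gcp_egress_cost(tb: float) -> float:
    """Tiered bill for `tb` terabytes leaving GCP for the open internet."""
    total, remaining = 0.0, tb
    for size_tb, per_gb in GCP_TIERS:
        chunk = min(remaining, size_tb)
        total += chunk * GB_PER_TB * per_gb
        remaining -= chunk
        if remaining <= 0:
            break
    return total


def gcp_monthly_total(cdn_offload: float, served_tb: float = 50.0,
                      compute: float = 2190.0, inter_region: float = 480.0) -> float:
    """Monthly bill when a `cdn_offload` share (0 to 1) of traffic exits via a CDN partner."""
    if not 0.0 <= cdn_offload <= 1.0:
        raise ValueError("cdn_offload must be between 0 and 1")
    billable_tb = served_tb * (1.0 - cdn_offload)
    return compute + gcp_egress_cost(billable_tb) + inter_region


if __name__ == "__main__":
    for share in (0.0, 0.5, 0.8, 0.95):
        print(f"{share:.0%} via CDN: ${gcp_monthly_total(share):,.0f}/month")
```

If your realistic offload share is closer to 50 percent than 80, the gap to AWS and Azure narrows quickly, so measure your cache hit ratio before committing.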

Inter-Region and Cross-Zone Transfer

AWS charges $0.01/GB in each direction for cross-availability-zone traffic within a single region. Sounds trivial. Run a three-tier application with your database cluster in different AZs from your app servers (the correct high-availability design, by the way) and you’re moving tens of terabytes internally. I’ve personally seen AWS bills where cross-AZ transfer costs exceeded compute costs entirely on database-heavy applications. Don’t make my mistake of leaving it out of the model. Azure and GCP charge similar cross-zone rates, so this isn’t a vendor differentiator as much as an architectural cost you need to account for everywhere.
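To put a number on cross-zone traffic, here is a back-of-the-envelope sketch. It assumes requests are spread uniformly across zones, decimal terabytes, and that the $0.01/GB rate is billed on both the sending and receiving side; check all three against your own topology and invoice.

```python
GB_PER_TB = 1000  # assumption: decimal terabytes


def cross_az_fraction(zones: int) -> float:
    """Share of requests that leave their own zone if targets are picked uniformly."""
    if zones < 1:
        raise ValueError("need at least one zone")
    return (zones - 1) / zones


def monthly_cross_az_cost(internal_tb: float, zones: int,
                          rate_per_gb: float = 0.01,
                          billed_directions: int = 2) -> float:
    """Cross-AZ charge for `internal_tb` of service-to-service traffic per month."""
    crossing_gb = internal_tb * GB_PER_TB * cross_az_fraction(zones)
    return crossing_gb * rate_per_gb * billed_directions


if __name__ == "__main__":
    # 45 TB of internal traffic is an illustrative figure, not a measurement.
    print(f"${monthly_cross_az_cost(45, zones=3):,.0f}/month")
```

Zone-aware routing lowers the crossing fraction, which is usually a cheaper fix than collapsing to a single AZ.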

AI and ML Workloads — Who Wins on GPU Pricing

This section looked completely different eighteen months ago. The GPU landscape shifted fast in 2025 and early 2026 — and the right answer depends heavily on what phase of the ML lifecycle you’re optimizing for. Training runs, inference serving, and fine-tuning each tell a different story.

GPU Instance Pricing — H100 and A100 Tier

Frustrated by months of trying to actually get H100 capacity on-demand, I eventually pieced together a real-world picture that’s messier than any pricing page suggests.

- AWS p5.48xlarge (8x H100 80GB SXM) — $98.32/hr on-demand | Reserved pricing not widely published, negotiate with your rep | Spot: rarely available, ~$60–75/hr when it surfaces

- Azure ND H100 v5 (8x H100 80GB) — $98.00/hr on-demand | Reserved 1-year: ~$63.70/hr | Spot: ~$29–40/hr with high interruption risk during peak periods

- GCP a3-highgpu-8g (8x H100 80GB) — $98.32/hr on-demand | 1-year Committed: ~$63.85/hr | Spot: ~$29–35/hr

At the H100 tier, all three providers have essentially converged on the same on-demand price. Differentiation comes from availability, committed pricing, and ecosystem tooling — not the sticker rates.

Azure’s OpenAI Advantage — Real But Specific

Azure has a structural advantage for teams building on OpenAI models. Azure OpenAI Service delivers GPT-4o, o3, and the rest of the model family through Microsoft’s infrastructure: private endpoints, no data leaving your Azure tenant, enterprise SLAs. The pricing mirrors OpenAI’s direct API pricing, $2.50 per million input tokens for GPT-4o as of early 2026, so there’s no discount on the tokens themselves. The compliance and data residency guarantees are meaningful for regulated industries, though. Healthcare and financial services companies already paying for Azure compute get consolidated billing and a cleaner data processing agreement story when they add Azure OpenAI Service.

That’s a real advantage. It’s just not a compute price advantage — it’s a procurement and compliance advantage dressed up as a technical one. Worth knowing the difference.

GCP TPUs — Cheaper Training at Scale

Training your own models rather than consuming API endpoints? GCP’s TPU v5e is genuinely worth a serious look. A v5e-256 pod (256 chips) runs at approximately $2.40/chip/hour on-demand, or around $1.20/chip/hour on 1-year Committed Use. For large-scale training runs on transformer architectures, the kind where you’re burning thousands of chip-hours, TPUs can come in 30 to 40 percent cheaper than equivalent H100 configurations. The reason is better memory bandwidth for the specific matrix multiplication patterns that dominate training.
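Whether that saving materialises depends on throughput, which no pricing page gives you. One hedged way to frame it: convert the pod’s hourly price into the number of 8x H100 nodes the same money would rent, then benchmark against that. The sketch uses the TPU rates above and the a3-highgpu-8g rates from the GPU list; it says nothing about which side trains faster.

```python
def pod_hourly_cost(chips: int, rate_per_chip_hour: float) -> float:
    """Hourly price of a TPU pod slice."""
    return chips * rate_per_chip_hour


def equivalent_h100_nodes(pod_hourly: float, node_hourly: float) -> float:
    """How many 8x H100 nodes the same hourly spend would rent."""
    return pod_hourly / node_hourly


if __name__ == "__main__":
    scenarios = {
        # name: ($/chip/hr for v5e, $/hr for one 8x H100 node)
        "on-demand": (2.40, 98.32),
        "1-year committed": (1.20, 63.85),
    }
    for name, (tpu_rate, node_rate) in scenarios.items():
        pod = pod_hourly_cost(256, tpu_rate)
        nodes = equivalent_h100_nodes(pod, node_rate)
        print(f"{name}: v5e-256 at ${pod:,.2f}/hr buys about "
              f"{nodes:.1f} H100 nodes ({nodes * 8:.0f} GPUs)")
```

If your model trains faster on the pod than on that many H100 nodes, the TPU wins on cost; if not, it doesn’t, whatever the per-chip rate says.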

The limitation is ecosystem friction. PyTorch support on TPUs has improved dramatically but isn’t seamless. Teams already running JAX workloads or willing to use TensorFlow have a much easier path. If your ML stack is PyTorch-native and you’re not planning to invest engineering time in XLA compilation tuning, the TPU cost advantage disappears into overhead fast.

AWS — Broadest GPU Selection, Best Inference Infrastructure

AWS wins on raw selection. Beyond standard H100 and A100 configurations, there’s Trainium2 for training, Inferentia2 designed specifically for inference serving, and a mature SageMaker ecosystem that handles MLOps scaffolding most teams would otherwise build from scratch. An inf2.48xlarge running Inferentia2 chips serves inference at roughly 40 to 60 percent lower cost per token than equivalent GPU-based inference for many popular model architectures — Llama 3, Mistral, and similar transformer models all have published Inferentia2 performance benchmarks that hold up in real production environments.

For inference at scale — serving a model to thousands of concurrent users — AWS’s custom silicon story is currently the strongest. For training large models from scratch with minimal ecosystem friction, the comparison gets closer. For consuming frontier model APIs with enterprise compliance requirements, Azure wins without much contest.

The Verdict — Match Your Workload to Your Cloud

There is no universal winner. Anyone telling you to just pick one cloud for everything is either oversimplifying or selling something. Here’s where I’d actually put money in 2026, workload by workload.

Consumer SaaS With High Egress Volume

Winner — GCP

Serving web traffic through a CDN partner means GCP’s free egress program can cut your data transfer costs by 60 to 80 percent compared to AWS or Azure. Combined with the 5 to 8 percent compute cost advantage and automatic Sustained Use Discounts, GCP wins clearly for this profile. The tradeoff is a smaller managed service ecosystem — if you need something exotic in your stack, AWS probably has a managed version of it and GCP might not.

Enterprise Software With Microsoft Stack Dependencies

Winner — Azure

Hybrid Benefit licensing for Windows Server and SQL Server is real money — not marketing. Windows-heavy workloads running under Azure Hybrid Benefit can see compute costs drop 40 percent compared to AWS or GCP running equivalent workloads without existing license agreements. Add the Azure OpenAI Service compliance story for regulated industries, existing Microsoft EA agreements, and Active Directory integration that’s native rather than bolted on — Azure frequently wins for enterprise shops already deep in the Microsoft ecosystem, despite not winning on raw compute pricing alone.

Batch Processing and Fault-Tolerant Workloads

Winner — AWS (via Spot)

AWS’s Spot instance market is the deepest and most liquid available. For batch jobs, data pipelines, rendering farms, and any workload that can checkpoint and restart, AWS Spot pricing is hard to beat when you can get it. The interruption rate runs higher than Azure Spot in my experience, but the discount is also larger and the instance variety is greater. Architect your batch system to handle interruptions gracefully and AWS Spot is likely your cheapest compute option regardless of what GCP’s on-demand numbers look like.
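Handling interruptions gracefully mostly comes down to checkpointing. Below is a minimal, provider-agnostic sketch that records progress in a local JSON file; the file layout and function names are hypothetical, and on a real Spot fleet the checkpoint would live on durable shared storage rather than the instance disk.

```python
import json
import os
import tempfile


def load_checkpoint(path: str) -> int:
    """Return the index of the next unprocessed item (0 if nothing is saved yet)."""
    try:
        with open(path) as f:
            return json.load(f)["next_index"]
    except FileNotFoundError:
        return 0


def save_checkpoint(path: str, next_index: int) -> None:
    """Write to a temp file, then rename, so a kill mid-write cannot corrupt progress."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump({"next_index": next_index}, f)
    os.replace(tmp_path, path)  # atomic when both paths are on the same filesystem


def run_batch(items, process, checkpoint_path: str) -> None:
    """Process items in order, resuming after the last checkpointed item."""
    start = load_checkpoint(checkpoint_path)
    for index in range(start, len(items)):
        process(items[index])
        save_checkpoint(checkpoint_path, index + 1)
```

This gives at-least-once semantics: an interruption between `process` and `save_checkpoint` means that one item runs again on restart, so `process` should be idempotent.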

ML Training at Scale

Winner — GCP (JAX/TensorFlow) or AWS (PyTorch/Inferentia)

It splits on your stack. JAX or TensorFlow shops doing large-scale training runs should evaluate GCP TPUs seriously — unit economics at scale are compelling and the integrated tooling has matured considerably. PyTorch-native teams doing training runs will find H100 pricing comparable across all three providers, but AWS’s inference infrastructure on Inferentia2 creates a compelling end-to-end story from training through to serving. Azure makes sense here specifically if you’re building on top of OpenAI models and need enterprise compliance guarantees.

Multi-Region, Low-Latency Applications

Winner — AWS

AWS operates 33 geographic regions, fewer than GCP’s 40 and Azure’s 60-plus, but its inter-region network performance and global edge footprint through CloudFront and Global Accelerator remain the most mature option for latency-sensitive applications. If you’re running a real-time gaming backend, live video infrastructure, or a financial trading system where 50ms versus 80ms matters, AWS’s global network infrastructure has the deepest operational history and the most granular latency optimization tooling available.

One Final Number Worth Keeping in Mind

Across a representative portfolio of workloads — mixed web serving, databases, batch processing, light ML — teams that optimize cloud choice per workload type rather than standardizing on a single provider report 20 to 35 percent lower cloud bills in most internal benchmarks I’ve reviewed. Multi-cloud operations add management overhead, no question about that. But the cost differential at scale is real enough that for any team spending more than $50,000 per month on cloud infrastructure, a serious evaluation of workload-to-provider matching is worth every hour of engineering time it takes to model properly.
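A quick way to test whether that evaluation is worth it is to net the expected savings against the extra operational cost. This is a sketch with invented overhead and migration figures; only the $50,000 spend and the 20 to 35 percent range come from the paragraph above.

```python
from typing import Optional


def net_monthly_savings(monthly_spend: float, savings_rate: float,
                        monthly_overhead: float) -> float:
    """Savings from workload matching minus recurring multi-cloud overhead."""
    return monthly_spend * savings_rate - monthly_overhead


def payback_months(migration_cost: float, monthly_spend: float,
                   savings_rate: float, monthly_overhead: float) -> Optional[float]:
    """Months to recover a one-off migration cost, or None if it never pays back."""
    net = net_monthly_savings(monthly_spend, savings_rate, monthly_overhead)
    if net <= 0:
        return None
    return migration_cost / net


if __name__ == "__main__":
    # Hypothetical inputs: $4,000/month extra tooling and on-call load,
    # $60,000 of one-off engineering time to migrate.
    for rate in (0.20, 0.35):
        months = payback_months(60_000, 50_000, rate, 4_000)
        print(f"{rate:.0%} savings: payback in {months:.1f} months")
```

If the payback period runs longer than your commitment terms, standardizing on one provider is probably the better call for now.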

Run your own numbers. Pull actual egress volumes from your current provider’s billing dashboard — not estimates. Price in the committed use discounts you’d realistically qualify for based on your actual usage patterns. And check the egress line item on your current bill before assuming compute is your biggest cost driver. For most web-facing applications, it isn’t.
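If you can export your bill as line items, checking which category dominates takes a few lines. The CSV layout below is hypothetical (real exports from each provider use their own column names), and the sample rows reuse the AWS estimate from earlier purely as illustration.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export layout: one row per line item with a category and a cost.
SAMPLE_EXPORT = """category,description,cost_usd
compute,20x m7i.xlarge 1-year Reserved,2380.00
egress,50 TB to internet,4250.00
inter_region,5 TB inter-region transfer,500.00
"""


def cost_share_by_category(csv_text: str) -> dict:
    """Map each category to its fraction of the total bill."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["category"]] += float(row["cost_usd"])
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: cost / grand_total for category, cost in totals.items()}


if __name__ == "__main__":
    shares = cost_share_by_category(SAMPLE_EXPORT)
    for category, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{category:>12}: {share:.1%}")
```

Map your provider’s real column names onto `category` and `cost_usd` and run it on a full month before deciding where to optimize first.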
