AWS vs Azure vs GCP — Where Each Cloud Actually Wins in 2026

Every year I rerun this comparison, and every year someone in the comments tells me I’m wrong. So let me be upfront: the AWS vs Azure vs GCP comparison for 2026 looks meaningfully different from even two years ago, and if you’re making infrastructure decisions based on 2023 articles, you’re probably overpaying or underprovisioning somewhere. I’ve spent the last eight years architecting production workloads across all three platforms — migrating a 400-node Hadoop cluster to GCP Dataproc, running enterprise SQL Server estates on Azure, and managing multi-region AWS deployments for fintech clients. I have opinions. They’re based on bills, not benchmarks.

This is not a vendor-neutral article. Vendor-neutral means useless. I’m going to name winners per category because that’s what you actually need when you’re sitting in front of a budget spreadsheet.

Compute — Winner by Workload Type

AWS wins on raw compute variety. Full stop. If you need a specific instance shape — high memory, high CPU, local NVMe, specific GPU — AWS almost certainly has it. As of early 2026, AWS offers over 750 instance types across its EC2 catalog. Azure has roughly 400. GCP sits around 200 but makes up for it with custom machine types, which is genuinely useful when your workload doesn’t fit a standard shape.
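GCP’s custom machine types let you choose vCPU count and memory independently, so you don’t have to round up to the nearest predefined shape. The sketch below shows the naming and validation involved. It assumes N1-style limits as I understand them: 1 or an even number of vCPUs, 0.9 to 6.5 GB per vCPU, and memory in 256 MB multiples. The helper itself is hypothetical, and other machine families have different limits, so check the Compute Engine docs before relying on these numbers.

```python
# Hypothetical helper: builds a GCP custom machine type name and checks it
# against ASSUMED N1-style limits. Other families (e.g. N2) use a prefix such
# as "n2-custom" and different memory-per-vCPU bounds.

def custom_machine_type(vcpus: int, memory_mb: int, prefix: str = "custom",
                        min_gb_per_vcpu: float = 0.9,
                        max_gb_per_vcpu: float = 6.5) -> str:
    # Assumed rule: a single vCPU, or an even number of vCPUs
    if vcpus != 1 and (vcpus < 2 or vcpus % 2 != 0):
        raise ValueError("vCPU count must be 1 or an even number")
    # Assumed rule: memory is specified in 256 MB multiples
    if memory_mb % 256 != 0:
        raise ValueError("memory must be a multiple of 256 MB")
    gb_per_vcpu = memory_mb / 1024 / vcpus
    if not (min_gb_per_vcpu <= gb_per_vcpu <= max_gb_per_vcpu):
        raise ValueError(f"{gb_per_vcpu:.2f} GB per vCPU is outside the allowed range")
    return f"{prefix}-{vcpus}-{memory_mb}"

print(custom_machine_type(6, 20480))
```

For example, `custom_machine_type(6, 20480)` returns `"custom-6-20480"`, which is a shape between the predefined 4-vCPU and 8-vCPU options.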

Windows Workloads — Azure Wins Clearly

Running Windows Server, SQL Server, or anything deeply tied to Active Directory? Azure. That’s not a close call. Azure Hybrid Benefit alone — which lets you bring existing Windows Server and SQL Server licenses to Azure — can cut your compute costs by 40 to 85 percent depending on license coverage. I’ve seen enterprises drop their monthly Azure bill from $180,000 to under $60,000 just by properly applying Hybrid Benefit licenses they already owned. AWS has a Bring Your Own License program too, but the tooling is clunkier and the savings are less predictable.
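The savings range is wide because Hybrid Benefit removes the license portion of a Windows or SQL Server VM price. What you save therefore depends on how much of that price was license to begin with. Here is a rough Python model of that. The 45 percent license share and the $1.00/hour price are made-up placeholders, not Azure list prices.

```python
# Illustrative model only: treats a VM's pay-as-you-go price as
# infrastructure + license, and Hybrid Benefit as removing the license part.

def hybrid_benefit_monthly(vm_price_hourly: float, license_share: float,
                           hours: float = 730.0) -> tuple[float, float]:
    """Return (monthly cost without, monthly cost with) Hybrid Benefit."""
    without = vm_price_hourly * hours
    with_benefit = without * (1.0 - license_share)  # license portion removed
    return without, with_benefit

without, with_ahb = hybrid_benefit_monthly(vm_price_hourly=1.00, license_share=0.45)
print(f"${without:,.2f} -> ${with_ahb:,.2f} ({1 - with_ahb / without:.0%} saved)")
```

For scale, the $180,000 to $60,000 drop mentioned above is a 67 percent reduction, which falls inside the 40 to 85 percent range.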

Azure’s tight integration with Microsoft Entra ID (formerly Azure AD), Intune, and Microsoft 365 also means your identity layer just works. No SAML federation gymnastics. No weird latency on Kerberos tokens. It works because it’s the same company.

General Linux Compute — AWS Wins

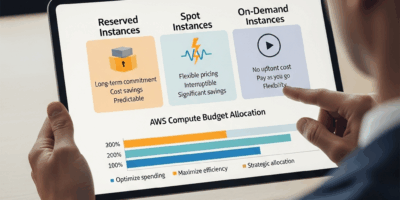

For everything else, AWS is still the default answer. The Graviton4 instances (c8g, m8g, r8g) are genuinely excellent. In my testing on a Node.js API workload, an m8g.4xlarge outperformed a comparably priced m7i.4xlarge by about 18 percent on throughput. Spot instances are the other reason AWS wins here. In most regions, AWS Spot capacity is easier to get and interruption rates are lower than with Azure Spot VMs. The Spot Instance Advisor also gives you useful interruption frequency data before you commit.
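The key qualifier in that Graviton result is “comparably priced.” What matters is throughput per dollar, not raw throughput. Below is a small sketch of that metric. The request rates and hourly prices are hypothetical placeholders, chosen to reproduce an 18 percent gap at equal price.

```python
# Throughput per dollar: requests served per dollar of instance time.
# All inputs are hypothetical placeholders, not measured AWS prices.

def requests_per_dollar(req_per_sec: float, price_per_hour: float) -> float:
    return req_per_sec * 3600 / price_per_hour

baseline = requests_per_dollar(req_per_sec=10_000, price_per_hour=0.80)  # x86 instance
graviton = requests_per_dollar(req_per_sec=11_800, price_per_hour=0.80)  # 18% more throughput
print(f"{graviton / baseline - 1:.0%} more requests per dollar")
```

If the Graviton instance is also cheaper per hour, its per-dollar advantage grows beyond the raw throughput gain.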

Containers — GCP Wins

Google invented Kubernetes, and that heritage shows. GKE (Google Kubernetes Engine) in Autopilot mode is still the most polished managed Kubernetes experience in 2026. It handles node provisioning, scaling, and bin-packing automatically. Its out-of-the-box security posture is also tighter than what EKS or AKS give you without significant manual hardening. If your team runs containerized microservices and doesn’t want to babysit node pools, GKE is the answer.
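Bin-packing here means fitting pods onto as few nodes as possible, based on their resource requests. The example below illustrates the problem Autopilot takes off your plate; it is not a description of GKE’s actual scheduler. It uses first-fit decreasing, a classic heuristic, reduced to a single CPU dimension.

```python
# First-fit decreasing bin-packing: sort pods by CPU request (largest first),
# place each on the first node with room, and open a new node when none fits.

def first_fit_decreasing(pod_cpu_requests: list[float], node_cpu: float) -> list[list[float]]:
    nodes: list[list[float]] = []  # CPU requests placed on each node
    free: list[float] = []         # remaining CPU capacity per node
    for req in sorted(pod_cpu_requests, reverse=True):
        if req > node_cpu:
            raise ValueError(f"pod requesting {req} CPU cannot fit on any node")
        for i, capacity in enumerate(free):
            if req <= capacity:
                nodes[i].append(req)
                free[i] -= req
                break
        else:
            # No existing node had room: provision a new one
            nodes.append([req])
            free.append(node_cpu - req)
    return nodes

print(first_fit_decreasing([0.5, 2, 1.5, 3, 1, 0.5], node_cpu=4))
```

With 4-CPU nodes, the six example pods land on three nodes: `[[3, 1], [2, 1.5, 0.5], [0.5]]`. Real schedulers also juggle memory, affinity, and disruption budgets, which is exactly why handing this off is attractive.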

Since we’re talking compute pricing: AWS Spot can run 70 to 90 percent below on-demand pricing, and GCP Spot VMs run around 60 to 91 percent below on-demand. GCP also has sustained use discounts, which apply automatically with no upfront commitment. They kick in once an instance runs more than 25 percent of a month and reach roughly 20 to 30 percent off (depending on machine family) for a full month. They don’t stack with Spot pricing, though; they’re a discount on regular on-demand VMs. Azure Spot VMs offer similar discounts on paper, but interruption rates in popular regions like East US 2 are noticeably higher in practice.
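GCP’s sustained use discount is incremental: each successive quarter of the month is billed at a lower rate. The sketch below assumes the N1-style schedule of 100, 80, 60, and 40 percent of the base rate per quarter. Other families such as N2 use a shallower schedule, so treat the tier values as an assumption to check.

```python
# Effective price multiplier under an incremental sustained use discount.
# ASSUMED N1-style tiers: each quarter of the month billed at this share
# of the base rate. Spot VMs are not eligible for this discount.

N1_TIERS = [1.00, 0.80, 0.60, 0.40]

def sustained_use_multiplier(fraction_of_month: float, tiers=N1_TIERS) -> float:
    """Average price multiplier for an instance running this fraction of the month."""
    if not 0 < fraction_of_month <= 1:
        raise ValueError("fraction_of_month must be in (0, 1]")
    quarter = 1 / len(tiers)
    billed, remaining = 0.0, fraction_of_month
    for rate in tiers:
        used = min(quarter, remaining)  # usage that falls into this tier
        billed += used * rate
        remaining -= used
        if remaining <= 0:
            break
    return billed / fraction_of_month

print(sustained_use_multiplier(1.0), sustained_use_multiplier(0.5))
```

Under these tiers, a full month works out to 0.70 of the base price (30 percent off) and half a month to 0.90. Anything at or under 25 percent of the month gets no discount.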

Database — Winner by Engine

Probably should have opened with this section, honestly. For most companies, the database decision is the infrastructure decision. Compute is fungible. Your database isn’t.

PostgreSQL — AWS RDS or Aurora Wins

Aurora PostgreSQL is still the best managed Postgres offering available. The read replica lag is consistently under 10 milliseconds in healthy clusters, the storage auto-scaling is painless, and Aurora Serverless v2 — which scales in 0.5 ACU increments — handles bursty workloads elegantly. GCP’s AlloyDB has closed the gap significantly since 2024 and is genuinely competitive for analytics-heavy Postgres workloads. Azure Database for PostgreSQL Flexible Server is fine. It’s not exceptional.
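Aurora Serverless v2 moves in 0.5 ACU steps, and AWS describes one ACU as roughly 2 GiB of memory with proportional CPU and networking. That makes picking sensible min/max capacity bounds simple arithmetic. Below is a hypothetical helper; its default bounds are placeholders for whatever you configure on the cluster.

```python
import math

# Hypothetical sizing helper: converts a memory target into ACUs, assuming
# ~2 GiB per ACU, rounds up to the next 0.5 ACU step, and clamps to bounds.

def acus_for_memory(memory_gib: float, min_acu: float = 0.5, max_acu: float = 16.0) -> float:
    raw = memory_gib / 2.0                # ~2 GiB of memory per ACU
    stepped = math.ceil(raw * 2) / 2      # round up to a 0.5 ACU increment
    return min(max(stepped, min_acu), max_acu)

print(acus_for_memory(11))   # 5.5 ACU covers ~11 GiB
```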

SQL Server — Azure Wins (Obviously)

Azure SQL Managed Instance is the closest thing to running SQL Server on-premises without actually doing it. Point-in-time restore, SQL Agent, cross-database queries, CLR — all of it works. AWS RDS for SQL Server has gotten better but still lacks some enterprise features SQL Server shops rely on. This one isn’t a contest.

NoSQL — Depends on Your Pattern

DynamoDB remains the best managed key-value and document database for high-throughput, low-latency workloads. Sub-5ms reads at any scale, single-digit millisecond writes, and the on-demand pricing model means you’re not capacity planning. Firestore (GCP) is excellent for mobile and web applications that need real-time sync. Cosmos DB (Azure) is the most versatile — it supports multiple APIs including MongoDB, Cassandra, and Gremlin — but the pricing model is notoriously confusing and the RU/s provisioning concept trips up almost every team I’ve seen adopt it for the first time.
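For contrast, here is the capacity arithmetic that DynamoDB on-demand mode lets you skip. In provisioned mode, one RCU covers one strongly consistent read per second of an item up to 4 KB, or two eventually consistent reads. One WCU covers one write per second of up to 1 KB. Transactional requests cost twice as much and aren’t modeled here, and the function names are mine.

```python
import math

# Provisioned-capacity arithmetic for DynamoDB.
# RCU: 1 strongly consistent read/sec up to 4 KB (or 2 eventually consistent).
# WCU: 1 write/sec up to 1 KB.

def read_capacity_units(reads_per_sec: float, item_kb: float, strongly_consistent: bool = True) -> int:
    units_per_read = math.ceil(item_kb / 4)   # each 4 KB chunk costs one unit
    total = reads_per_sec * units_per_read
    return math.ceil(total if strongly_consistent else total / 2)

def write_capacity_units(writes_per_sec: float, item_kb: float) -> int:
    return math.ceil(writes_per_sec * math.ceil(item_kb / 1))  # per 1 KB chunk

print(read_capacity_units(100, 6))                             # 200
print(read_capacity_units(100, 6, strongly_consistent=False))  # 100
print(write_capacity_units(50, 2.5))                           # 150
```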

Serverless Databases

Aurora Serverless v2 and Neon (a third-party serverless Postgres provider) dominate here. Azure SQL Database’s serverless tier exists, but its cold-start (resume from auto-pause) behavior is unpredictable enough that I wouldn’t put it behind a latency-sensitive API without careful warmup configuration.
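Much of that warmup is client-side. You retry the first connection with backoff while an auto-paused database resumes, instead of failing the request. Below is a driver-agnostic sketch. `connect` stands in for your driver’s connect call. Which exception means “still resuming” depends on the driver, so it is a parameter rather than a guess.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Retry a connection attempt with capped exponential backoff.
# `connect` and `retry_on` are placeholders for your driver's call and error type.

def connect_with_backoff(connect: Callable[[], T], retry_on: type[BaseException] = Exception,
                         attempts: int = 6, base_delay: float = 1.0, max_delay: float = 30.0,
                         sleep: Callable[[float], None] = time.sleep) -> T:
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return connect()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of retries: surface the driver's error
            sleep(min(base_delay * 2 ** attempt, max_delay))
    raise RuntimeError("unreachable")
```

Injecting `sleep` keeps the helper testable. In production you would usually also keep the total wait below your API’s own timeout.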

AI/ML — Why GCP and AWS Lead and Azure Is Catching Up

Frustrated by Azure’s AI positioning for years, I finally ran a proper three-way comparison on training and inference costs in late 2025. Here’s what I found.

Training Workloads

GCP Vertex AI with TPU v5e access is still the best option for training large transformer-based models if you can write JAX or use frameworks that support TPU. A v5e pod with 256 chips runs around $2.40 per chip-hour on reserved pricing, and training throughput on standard LLM pretraining benchmarks is roughly 1.4x what you get from equivalent A100 clusters on AWS. The problem is TPU availability. It’s better than it was, but you’re often waiting days for capacity in us-central1 unless you’ve pre-purchased committed use contracts.
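Here are the TPU figures in per-run terms. At about $2.40 per chip-hour, a 256-chip v5e pod costs roughly $614 per hour. The 72-hour run length below is hypothetical, and the break-even line simply divides by the 1.4x throughput estimate above.

```python
# Back-of-envelope TPU pod cost using the figures in the text.

def pod_cost(chips: int, price_per_chip_hour: float, hours: float) -> float:
    """Total cost of running `chips` TPU chips for `hours` hours."""
    return chips * price_per_chip_hour * hours

hourly = pod_cost(chips=256, price_per_chip_hour=2.40, hours=1)
run = pod_cost(chips=256, price_per_chip_hour=2.40, hours=72)  # hypothetical 72-hour run

# If the pod does ~1.4x the work per hour of the A100 cluster it is compared
# against, that cluster must cost less than this per hour to be cheaper
# for the same amount of training.
breakeven = hourly / 1.4
print(f"${hourly:,.2f}/pod-hour, ${run:,.2f} per 72h run, A100 break-even ${breakeven:,.2f}/hour")
```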

AWS SageMaker HyperPod with p5.48xlarge instances (H100 clusters) is the more reliable option for teams that need consistent GPU access on a schedule. The p5.48xlarge gives you eight H100 SXM5 80GB GPUs at around $98.32 per hour on-demand, or approximately $52 per hour with a 1-year reserved commitment. SageMaker’s training job management has matured — distributed training with FSDP across 32 nodes is genuinely less painful than it was two years ago.
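The reserved-versus-on-demand decision for p5.48xlarge reduces to one ratio. The sketch below uses the approximate rates above. It assumes the 1-year commitment bills every hour of the term, whether or not the instance is busy.

```python
# Break-even utilization for a commitment billed for every hour of the term.
ON_DEMAND = 98.32   # $/hour, approximate on-demand rate from the text
RESERVED = 52.00    # $/hour effective, approximate 1-year commitment

# Reserved wins when utilization * ON_DEMAND > RESERVED
break_even = RESERVED / ON_DEMAND
print(f"Commit if the instance is busy more than {break_even:.0%} of the time")
```

At these rates the commitment pays off once the instance is busy more than about 53 percent of the hours in the term. Below that, on-demand is cheaper.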

Inference — GCP Wins at Scale

Google’s custom silicon makes inference economics compelling at volume. TPUs (v5e and the newer Trillium generation) handle model serving, and Axion Arm CPUs run the surrounding services. Running Llama 3.1 70B on GCP with Vertex AI serving on TPU v5e hits around $0.18 per million tokens at scale with optimized batch sizes. Comparable deployments on AWS with Inferentia2 run around $0.22 to $0.28 per million tokens. Azure’s inference pricing on the NC A100 v4 series is higher still. Azure OpenAI Service, however, uses a separate per-token pricing model entirely and is often cheaper than self-hosted inference for standard models.
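Per-million-token figures like these come from dividing an hourly serving cost by sustained throughput. Below is a minimal converter. The $36/hour and 50,000 tokens/second inputs are hypothetical, not measurements from the deployments above.

```python
# Convert an hourly serving cost into dollars per million generated tokens.

def cost_per_million_tokens(hourly_cost: float, tokens_per_second: float) -> float:
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000

print(round(cost_per_million_tokens(hourly_cost=36.0, tokens_per_second=50_000), 4))  # 0.2
```

The throughput you plug in should be measured at your production batch size, since batching is what drives these numbers down.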

Azure ML — Genuinely Getting Better

Burnt by Azure ML’s instability in 2022 and 2023, I went back in late 2025 and found a much more stable platform. The Prompt Flow tooling for LLM application development is one of the better visual tools for chaining models, prompts, and tools. Azure’s partnership with OpenAI also makes it the only one of the three hyperscalers offering managed GPT-4o fine-tuning, which neither AWS nor GCP can match. If your ML work centers on Microsoft’s model ecosystem or you’re already in Azure, the gap has closed enough that migrating out purely for ML doesn’t make sense anymore.

Pricing — Who Is Actually Cheapest

List prices mean nothing. Here’s what real workloads actually cost after commitments, reserved instances, and sustained use discounts in 2026.

Workload 1 — Web application backend, 3-tier, 500 req/sec sustained: 4x application servers (8 vCPU, 32GB RAM), managed PostgreSQL with read replica, load balancer, 10TB egress monthly.

- AWS (m7g.2xlarge reserved 1-year, Aurora PostgreSQL r7g.xlarge) — approximately $2,800/month

- Azure (D8s_v5 reserved 1-year, Azure Database PostgreSQL Flexible) — approximately $3,100/month

- GCP (n2-standard-8 with sustained use discount, Cloud SQL for PostgreSQL) — approximately $2,650/month

GCP wins this one, largely on sustained use discounts applying automatically to the compute tier.
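Expressing the Workload 1 estimates as percentages makes the margins clearer. Here is a short script over the figures above.

```python
# Rank the Workload 1 monthly estimates and show each provider's premium
# over the cheapest option, monthly and annualized.
workload_1 = {"AWS": 2800, "Azure": 3100, "GCP": 2650}

cheapest = min(workload_1, key=workload_1.get)
for provider, monthly in sorted(workload_1.items(), key=lambda kv: kv[1]):
    premium = monthly / workload_1[cheapest] - 1
    extra_per_year = (monthly - workload_1[cheapest]) * 12
    print(f"{provider:<6} ${monthly:>6,}/month  +{premium:.1%}  (${extra_per_year:,}/year)")
```

GCP’s lead over AWS is about 5.7 percent ($1,800 a year). Azure sits about 17 percent above GCP.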

Workload 2 — Windows-based enterprise app, SQL Server, 200 concurrent users:

- AWS (r6i.4xlarge, RDS SQL Server SE, no BYOL) — approximately $8,900/month

- Azure (E16s_v5 with Hybrid Benefit, Azure SQL MI General Purpose) — approximately $3,400/month

- GCP (n2-standard-16, Cloud SQL SQL Server) — approximately $6,200/month

Azure wins by a landslide. That $5,500/month difference is real money. I’ve seen companies run their entire Azure environment essentially subsidized by SQL Server and Windows Hybrid Benefit savings.

Workload 3 — ML training, A100-class, about 100 instance-hours per week (8x A100 per instance on AWS and GCP, so roughly 800 GPU-hours):

- AWS (p4d.24xlarge Spot, approximately $9.83/hr spot) — approximately $4,000/month

- Azure (NC96ads A100 v4 Spot) — approximately $4,400/month

- GCP (a2-highgpu-8g Spot VM) — approximately $3,600/month
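Here is a quick sanity check on the AWS Spot line. A p4d.24xlarge (eight A100s) costs $9.83 per hour, so about $4,000 a month only works if the 100 weekly hours are instance-hours. Read as 100 GPU-hours on 8-GPU instances, the bill would be closer to $530. The script assumes 52/12 weeks per month.

```python
# Monthly Spot cost for a given number of instance-hours per week.
SPOT_PRICE = 9.83            # $/hour for a p4d.24xlarge (8x A100), from the text
WEEKS_PER_MONTH = 52 / 12    # ~4.33

def monthly_cost(instance_hours_per_week: float, price_per_hour: float = SPOT_PRICE) -> float:
    return instance_hours_per_week * WEEKS_PER_MONTH * price_per_hour

as_instance_hours = monthly_cost(100)    # 100 instance-hours/week
as_gpu_hours = monthly_cost(100 / 8)     # 100 GPU-hours/week on 8-GPU instances
print(f"instance-hours: ${as_instance_hours:,.0f}, GPU-hours: ${as_gpu_hours:,.0f}")
```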

GCP edges this one, but availability variability makes it less reliable as a plan. In practice I recommend AWS for this workload because its Spot interruption rates are more predictable. SageMaker Managed Spot Training also syncs checkpoints to S3 and resumes interrupted jobs automatically, though your training script still has to write and load those checkpoints.
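Managed Spot Training only helps if your script cooperates. Below is a minimal Python checkpoint/resume loop. It assumes SageMaker’s default local checkpoint path of /opt/ml/checkpoints, which SageMaker syncs to S3 when checkpointing is configured on the training job. The `CHECKPOINT_DIR` environment variable and the toy training state are hypothetical.

```python
import json
import os
from pathlib import Path

# Checkpoint/resume loop for interruptible (Spot) training. The "training
# step" is a stand-in; a real job would save model and optimizer state.

CHECKPOINT_DIR = Path(os.environ.get("CHECKPOINT_DIR", "/opt/ml/checkpoints"))

def load_state(ckpt_dir: Path) -> dict:
    ckpt = ckpt_dir / "state.json"
    if ckpt.exists():
        return json.loads(ckpt.read_text())
    return {"step": 0, "loss": None}  # fresh start

def save_state(ckpt_dir: Path, state: dict) -> None:
    ckpt_dir.mkdir(parents=True, exist_ok=True)
    tmp = ckpt_dir / "state.json.tmp"
    tmp.write_text(json.dumps(state))
    tmp.replace(ckpt_dir / "state.json")  # rename so a kill mid-write can't corrupt it

def train(total_steps: int, ckpt_dir: Path = CHECKPOINT_DIR, every: int = 100) -> dict:
    state = load_state(ckpt_dir)  # resumes where an interrupted run left off
    for step in range(state["step"], total_steps):
        state = {"step": step + 1, "loss": 1.0 / (step + 1)}  # placeholder step
        if state["step"] % every == 0 or state["step"] == total_steps:
            save_state(ckpt_dir, state)
    return state
```

Checkpoint frequency is the tradeoff. Saving more often loses less work per interruption but spends more time writing state.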

Egress costs remain a genuine headache across all three providers. Internet egress typically runs around $0.08 to $0.12 per GB at the first pricing tiers, and AWS and Azure each include the first 100GB per month free. If your workload moves large volumes of data out to end users or between clouds, that line item will shock you. Committed egress deals are available at enterprise scale, but you need to ask, because the providers don’t advertise them.
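To put a number on it, here is a flat-rate estimate with two simplifications: a single per-GB rate and a 100 GB monthly free allowance. Real price sheets are tiered by volume, destination, and network tier, so treat the $0.09 default as a placeholder.

```python
# Flat-rate monthly egress estimate: bill every GB above the free allowance.

def monthly_egress_cost(egress_gb: float, rate_per_gb: float = 0.09, free_gb: float = 100) -> float:
    return max(egress_gb - free_gb, 0) * rate_per_gb

print(f"${monthly_egress_cost(10_000):,.2f}")  # Workload 1's 10 TB, taken as 10,000 GB
```

Workload 1’s 10TB of monthly egress comes to about $891 at $0.09/GB. That is roughly a third of the Workload 1 monthly estimates above.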

The Verdict — Match Your Stack to the Right Cloud

Eight years of doing this has taught me one thing: the worst cloud choice is the one that doesn’t match your team’s actual skill set and vendor relationships.

Choose GCP if: You’re a Python and ML-first shop, you’re building on Kubernetes, or your primary workload is data analytics at scale. BigQuery alone is reason enough for some data teams. GCP’s pricing model rewards teams that run workloads consistently, and the developer tooling — especially around containers and data pipelines — is excellent. The tradeoff is a smaller partner ecosystem and fewer enterprise support options compared to AWS.

Choose Azure if: You’re a Microsoft shop. If you run Active Directory, significant SQL Server infrastructure, have existing Enterprise Agreements, or your developers live in Visual Studio and Azure DevOps, Azure is the rational choice. Don’t fight your existing licensing and tooling investment. The Hybrid Benefit savings are real and the integration story is genuinely good now.

Choose AWS if: You’re building anything else. Startups. SaaS. Multi-region consumer applications. Complex data pipelines that mix managed services. Anywhere you need the broadest selection of managed services and the deepest global footprint. AWS has 34 geographic regions, compared with Azure’s 60-plus regions and GCP’s 40. Azure leads on raw region count, but not every Azure region offers availability zones, while AWS builds each region around multiple availability zones. The AWS partner and talent ecosystem remains larger than the other two combined, which matters when you’re hiring or looking for specialized consulting help.

One last thing I see people get wrong every time I talk about this: treating these as permanent choices. Most companies of any real scale run at least two clouds in 2026. Picking a primary doesn’t mean you’re locked in. Pick the one that matches your dominant workload type, build with provider-agnostic patterns where it matters, and revisit the decision when your workload profile changes. That’s it. That’s the whole framework.
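“Provider-agnostic patterns where it matters” usually means a thin seam between your code and each cloud’s SDK. Below is a minimal Python sketch using `typing.Protocol`. `BlobStore`, `InMemoryBlobStore`, and `archive_report` are hypothetical names. Real adapters would wrap the S3, Azure Blob Storage, or Cloud Storage client libraries.

```python
from typing import Protocol

# Application code depends on this small interface; each cloud gets a thin
# adapter behind it.

class BlobStore(Protocol):
    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...

class InMemoryBlobStore:
    """Test double that satisfies BlobStore without any cloud SDK."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

def archive_report(store: BlobStore, report_id: str, body: str) -> str:
    """Business logic written against the interface, not a specific cloud."""
    key = f"reports/{report_id}.txt"
    store.put(key, body.encode("utf-8"))
    return key
```

The in-memory adapter doubles as a test fake, which is often where this seam pays for itself first.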
