Multi-Cloud Security Has Gotten Complicated With All the Competing Identity Systems Flying Around

As someone who has spent eight years watching teams migrate to multi-cloud setups, I’ve learned a lot about the blind spots that keep appearing. Today, I’ll share what I’ve seen.

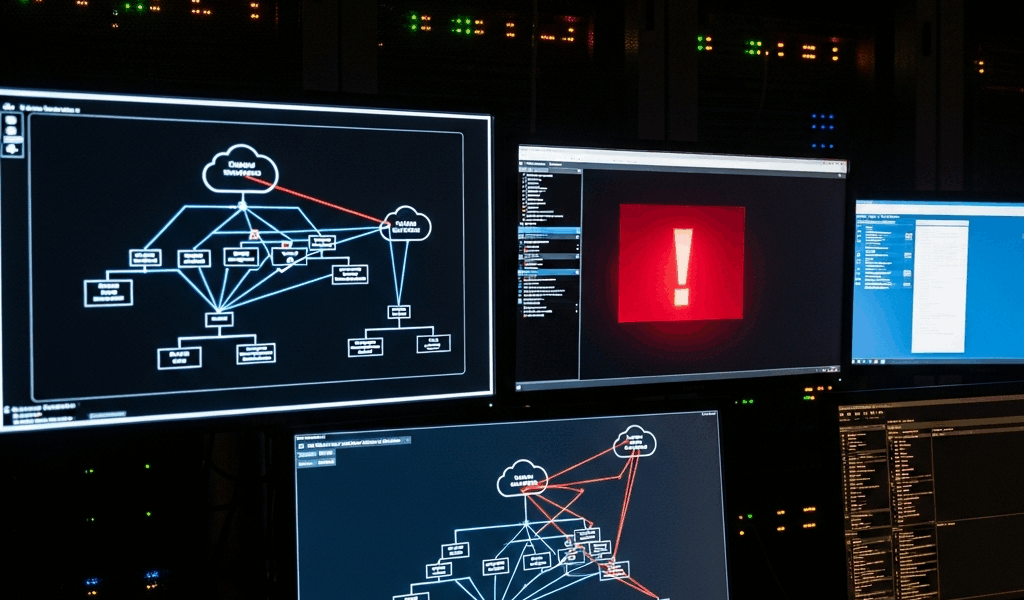

The gap isn’t complexity. It’s identity. AWS uses IAM roles. Azure uses managed identities and service principals. GCP has Workload Identity Federation. Each system speaks a different dialect of “who are you and what can you do?” — and that dialect problem is where attackers live.

Run one cloud and your security team knows the language. They understand how a role trust policy works in IAM. They know what a condition key does. They know the blast radius when something goes sideways. Add a second cloud and that certainty evaporates almost immediately. The seams between clouds — where one system hands off to another — become the exact places attackers probe first. A permission that looks locked down in AWS might translate to an overly broad grant in GCP. A deny rule that works in Azure might not exist at all somewhere else.

This isn’t hygiene advice. Not frameworks. The actual failure modes — the ones that show up in post-mortems.

So, without further ado, let’s dive in.

IAM Roles That Work in One Cloud But Grant Too Much in Another

Probably should have opened with this section, honestly. Federated identity is where most multi-cloud breaches start — and it’s the gap that shows up in post-mortems more than any other.

So what is federated identity risk? In essence, it’s what happens when a cross-cloud trust relationship doesn’t carry the same permission constraints on both sides. The constraints you wrote in one cloud are enforced only by that cloud, and only on the path you attached them to.

Here’s what I see happen repeatedly. A team creates an AWS IAM role called cross-cloud-deployer, meant to be assumed by deployment workloads running in GCP and Azure. In AWS, this role is scoped tight: maybe it can only be used inside a specific VPC, only during certain hours, only from a specific IP range like 10.0.4.0/24. Looks solid.

Then that role gets assumed by a GCP service account running in a pod. GCP doesn’t understand AWS condition keys. It doesn’t know about the IP range restriction or the time-based constraint. From GCP’s perspective, the service account simply “can assume this other role.” Full stop. Those conditions are evaluated only by AWS, and only on the statements they’re attached to. If the federated trust statement checks nothing beyond the token’s issuer and audience, an attacker who compromises the GCP workload can assume that AWS role without hitting any of the original restrictions, because those restrictions were written for a different way in.

This isn’t hypothetical. I watched a security team catch this exact pattern when they found lateral movement logs showing a GCP service account assuming an AWS IAM role, then using that role to export a database snapshot to an S3 bucket the attacker controlled. The AWS logs showed the snapshot export. The GCP logs showed the role assumption. Neither team owned both log sets, so it took three weeks to connect the dots. Three weeks.

The problem compounds with Azure AD (now Microsoft Entra ID) app registrations. Federate Azure AD with AWS using OIDC or SAML and you’re creating a trust relationship that maps Azure AD group claims onto AWS IAM roles. Azure AD group membership is dynamic. Role assignments flow through admin consent workflows. But when Azure AD hands off to AWS, AWS treats the token as a static set of claims: a snapshot with no memory of how those claims got there. An attacker who adds themselves to an Azure AD group that’s federated to a high-privilege AWS role inherits that role without triggering any of the approval workflows that would normally catch the lateral move inside Azure itself.
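To see the “snapshot” for yourself, it helps to decode a token and look at exactly what the receiving cloud gets. Here is a minimal sketch, assuming an OIDC-style JWT with `sub`, `groups`, `iat`, and `exp` claims. Those claim names are an assumption for illustration; what your identity provider actually emits depends on how it’s configured. The sketch deliberately does not verify the signature, so treat it as an inspection aid only.

```python
import base64
import json
from datetime import datetime, timezone


def decode_claims(jwt: str) -> dict:
    """Return the payload claims of a JWT WITHOUT verifying the signature.

    Inspection only: never make an authorization decision from this output.
    """
    parts = jwt.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    # JWTs use unpadded base64url; restore the padding before decoding.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))


def summarize(claims: dict) -> dict:
    """Pull out the fields the relying cloud will actually see."""
    issued = datetime.fromtimestamp(claims["iat"], tz=timezone.utc)
    expires = datetime.fromtimestamp(claims["exp"], tz=timezone.utc)
    return {
        "subject": claims.get("sub"),
        "groups": claims.get("groups", []),
        "lifetime_seconds": int((expires - issued).total_seconds()),
    }
```

Nothing in that summary says who approved the group membership or when it was granted. That is the whole point: the receiving side gets the claim, not the history behind it.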

The fix isn’t to avoid federation. Audit what each cloud actually sees when the handoff happens. Document the exact permission grant that results on each side. Test it. Then test it again whenever someone adds a new cloud or modifies the trust policy. Don’t make my mistake — I assumed the AWS-side restrictions would propagate. They didn’t. They never do.
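One way to start that audit on the AWS side is to read the role’s trust policy and list what each federated statement actually checks. The sketch below works on the policy JSON as a plain Python dict, so it makes no API calls. The example policy, the account ID, the role ARN, and the audience value are all made up for illustration, and the “pins subject” check is a rough heuristic rather than a complete review.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """IAM JSON allows a single value or a list; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def audit_trust_policy(policy: dict) -> list[dict]:
    """List every federated Allow statement and the condition keys guarding it."""
    findings = []
    for stmt in _as_list(policy.get("Statement")):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal", {})
        if not isinstance(principal, dict) or "Federated" not in principal:
            continue  # AWS-native principal ("AWS", "Service") or "*"
        keys = sorted(
            key
            for operator_block in stmt.get("Condition", {}).values()
            for key in operator_block
        )
        findings.append({
            "federated": _as_list(principal["Federated"]),
            "actions": _as_list(stmt.get("Action")),
            "condition_keys": keys,
            # Without a subject pin, any identity from that provider qualifies.
            "pins_subject": any(k.lower().endswith(":sub") for k in keys),
        })
    return findings


# Hypothetical trust policy: the IP condition sits on the AWS-native
# statement only, so the federated statement never sees it.
EXAMPLE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::111122223333:role/ci-runner"},
            "Action": "sts:AssumeRole",
            "Condition": {"IpAddress": {"aws:SourceIp": "10.0.4.0/24"}},
        },
        {
            "Effect": "Allow",
            "Principal": {"Federated": "accounts.google.com"},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {
                "StringEquals": {"accounts.google.com:aud": "example-audience"}
            },
        },
    ],
}

for finding in audit_trust_policy(EXAMPLE_POLICY):
    print(finding)
```

In this made-up policy the federated statement is guarded by an audience check and nothing else. The IP restriction everyone remembers writing belongs to the other statement.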

Logging Blind Spots When Clouds Don’t Talk to Each Other

CloudTrail in AWS captures API calls. Azure Monitor Logs captures resource operations. GCP Cloud Audit Logs captures both admin and data access events. They all record similar information. Different schemas, different timestamps, different formats for the same basic concepts. That’s what makes multi-cloud logging so frustrating for defenders.

When you don’t centralize these logs, lateral movement across clouds is essentially invisible. An attacker moves from AWS to GCP. The AWS logs show credential exports from Systems Manager Parameter Store. The GCP logs show a new service account being created, then used to read sensitive data. Those logs sit in separate places, owned by separate teams — and no one connects them for weeks.

I’m apparently drawn to fintech horror stories, and this one is real. A company running workloads across AWS, GCP, and Azure had CloudTrail configured. Cloud Audit Logs configured. They were even shipping some logs to a centralized SIEM — a Splunk deployment that cost them around $180,000 annually. But cross-account AWS logs weren’t being aggregated. GCP logs were going to a separate Cloud Storage bucket, gs://infra-audit-logs-prod, that wasn’t connected to Splunk. Azure logs were sitting in a Log Analytics workspace that was set up but not indexed for cross-system queries. When an insider exfiltrated customer records, they moved the data from an AWS RDS snapshot to a GCP bucket they created, then downloaded it from there. Visible in all three log systems. Took two weeks to find it because nobody was running AWS-to-GCP queries.
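The kind of AWS-to-GCP query nobody was running can be sketched in a few lines, assuming the events have already been pulled into one place and reduced to a common shape. The field names here (`cloud`, `time`, `source_ip`, `action`) are a hypothetical schema, and the sample events are invented. Joining on caller IP is only one possible key, and a weak one, since an attacker can act from different addresses in each cloud.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def cross_cloud_activity(events, window=timedelta(hours=1)):
    """Return (ip, earlier_event, later_event) tuples where the same caller IP
    acts in two different clouds within `window` of each other."""
    by_ip = defaultdict(list)
    for event in events:
        by_ip[event["source_ip"]].append(event)

    hits = []
    for ip, group in by_ip.items():
        group.sort(key=lambda e: e["time"])
        for i, first in enumerate(group):
            for second in group[i + 1:]:
                if second["time"] - first["time"] > window:
                    break  # sorted by time, so everything after is further away
                if second["cloud"] != first["cloud"]:
                    hits.append((ip, first, second))
    return hits


# Invented sample events in the hypothetical common schema.
T0 = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
EXAMPLE_EVENTS = [
    {"cloud": "aws", "time": T0, "source_ip": "203.0.113.7",
     "action": "rds:StartExportTask"},
    {"cloud": "gcp", "time": T0 + timedelta(minutes=10), "source_ip": "203.0.113.7",
     "action": "storage.buckets.create"},
    {"cloud": "gcp", "time": T0 + timedelta(hours=3), "source_ip": "203.0.113.7",
     "action": "storage.objects.get"},
    {"cloud": "azure", "time": T0 + timedelta(minutes=5), "source_ip": "198.51.100.9",
     "action": "storageAccounts/read"},
]
```

The nested loop is fine for a sketch. At real log volumes you would push the same join into the SIEM’s query language instead.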

The technical path forward: CloudTrail logs go to S3. GCP Cloud Audit Logs go to Cloud Logging and leave through a log sink. Azure sends everything to a Log Analytics workspace or a storage account. All three feed into a centralized aggregation layer: Chronicle, Splunk, Sentinel, or a custom pipeline built on something like Pub/Sub or Kafka. Datadog and Sumo Logic handle this too, though at scale you’re looking at $50,000-plus annually before you’ve normalized anything.

Aggregation alone isn’t enough, though. You need schema normalization so you can actually run cross-cloud queries. An AWS API call and a GCP API call are not the same format. Timestamp precision differs: CloudTrail’s eventTime is an ISO 8601 UTC timestamp at one-second precision, while GCP log entries use RFC 3339 with up to nanosecond precision. Principal names format differently. Without normalization you end up with logs you technically possess but can’t actually search when it matters.
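Here is a rough sketch of what that normalization might look like for two of the three clouds. The input field names (`eventTime`, `eventSource`, `eventName`, `userIdentity.arn`, `sourceIPAddress` for CloudTrail; `timestamp` and the `protoPayload` fields for GCP audit entries) are the ones those record formats use. The output schema and the function names are my own assumption, and a real pipeline would handle far more fields and edge cases. The timestamp parser accepts anywhere from zero to nine fractional digits and only handles UTC timestamps ending in `Z`.

```python
import re
from datetime import datetime, timezone

_TS = re.compile(r"^(\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2})(?:\.(\d+))?Z$")


def parse_utc(ts: str) -> datetime:
    """Parse a UTC 'Z' timestamp with any number of fractional digits.

    Python datetimes hold microseconds, so digits past the sixth are dropped.
    """
    match = _TS.match(ts)
    if not match:
        raise ValueError(f"unsupported timestamp: {ts!r}")
    base = datetime.strptime(match.group(1), "%Y-%m-%dT%H:%M:%S")
    micros = int((match.group(2) or "").ljust(6, "0")[:6])
    return base.replace(microsecond=micros, tzinfo=timezone.utc)


def from_cloudtrail(record: dict) -> dict:
    """Map one CloudTrail record onto the (hypothetical) common schema."""
    return {
        "cloud": "aws",
        "time": parse_utc(record["eventTime"]),
        "principal": record.get("userIdentity", {}).get("arn"),
        "action": f'{record["eventSource"]}:{record["eventName"]}',
        "source_ip": record.get("sourceIPAddress"),
    }


def from_gcp_audit(entry: dict) -> dict:
    """Map one GCP audit LogEntry onto the same schema."""
    payload = entry.get("protoPayload", {})
    return {
        "cloud": "gcp",
        "time": parse_utc(entry["timestamp"]),
        "principal": payload.get("authenticationInfo", {}).get("principalEmail"),
        "action": f'{payload.get("serviceName")}:{payload.get("methodName")}',
        "source_ip": payload.get("requestMetadata", {}).get("callerIp"),
    }
```

Once both sides produce timezone-aware datetimes and the same key names, sorting and joining across clouds becomes an ordinary query instead of a forensic project.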

Policy Drift When the Same Rule Means Different Things Per Cloud

AWS Service Control Policies are genuinely powerful. Write a policy that denies data exfiltration across the entire organization — something like “deny s3:PutObject to any bucket outside our approved list” — and it holds. Solid control. Blocks a common attack path.

Then you need the same control in Azure. So you write an Azure Policy that denies storage account access outside your approved list. Feels equivalent. Isn’t.

Azure Policy works at the resource level. AWS SCPs work at the principal level. That distinction matters enormously. When an AWS SCP denies something, every principal in the accounts it covers is blocked, with no exceptions short of modifying the SCP (the one carve-out is the organization’s management account, which SCPs don’t restrict). When an Azure Policy denies something, exemptions exist, assignments can exclude scopes, and gaps can open that the policy author never intended. The syntax is different. The enforcement model is different. The audit trail is different. That’s what makes policy drift so dangerous to teams maintaining both environments simultaneously.

A real example: A team wrote an SCP to deny all s3:GetObject calls to buckets outside their AWS organization. Then they wrote what they believed was a matching Azure policy. The Azure policy had a single mistake: it denied Microsoft.Storage/storageAccounts/read instead of Microsoft.Storage/storageAccounts/blobServices/containers/blobs/read. The policy looked equivalent in a five-minute review. It wasn’t. An attacker could enumerate blobs in unauthorized storage accounts because the blob-level operation wasn’t blocked, even though the AWS side was locked down tight.

Built separately, tested separately, assumed equivalent. That assumption kills multi-cloud security programs. I’ve watched it happen at three different organizations over the past four years.

Infrastructure-as-code for policies catches this. Terraform or Pulumi lets you define policy intent once, in one repository, and express it in the correct syntax for each cloud (CloudFormation can play the same role, but it’s built for the AWS side). Not perfect, since you still need to understand each cloud’s policy engine deeply, but it surfaces version skew and makes policy changes visible across all environments at once, not sequentially after something breaks.
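Even before a full IaC setup, the “define intent once” idea can be tested with a small check in CI. The sketch below assumes you keep a catalogue that maps each control to the operation it must deny in each cloud, then compares that against what each policy actually declares. The catalogue, the intent name, and the `DeclaredPolicy` shape are all hypothetical. The operation strings are the ones from the example earlier. It does plain string matching, so wildcards and inherited policies are out of scope.

```python
from dataclasses import dataclass

# Hypothetical catalogue: one control, and the operation it must deny per cloud.
INTENTS = {
    "deny-object-read-outside-org": {
        "aws": "s3:GetObject",
        "azure": "Microsoft.Storage/storageAccounts/blobServices/containers/blobs/read",
    },
}


@dataclass(frozen=True)
class DeclaredPolicy:
    cloud: str
    intent: str
    denied_operations: frozenset


def equivalence_gaps(policies):
    """Return (cloud, intent, missing_operation) for every policy that does
    not deny the operation its intent requires."""
    gaps = []
    for policy in policies:
        required = INTENTS[policy.intent][policy.cloud]
        denied = {op.lower() for op in policy.denied_operations}
        if required.lower() not in denied:
            gaps.append((policy.cloud, policy.intent, required))
    return gaps


# The mismatch described earlier: the Azure policy names the account-level
# read operation instead of the blob-level one.
EXAMPLE_POLICIES = [
    DeclaredPolicy("aws", "deny-object-read-outside-org",
                   frozenset({"s3:GetObject"})),
    DeclaredPolicy("azure", "deny-object-read-outside-org",
                   frozenset({"Microsoft.Storage/storageAccounts/read"})),
]

for gap in equivalence_gaps(EXAMPLE_POLICIES):
    print("not equivalent:", gap)
```

A check like this would not replace testing the policies against real requests, but it turns a five-minute eyeball review into something that fails loudly.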

How to Find These Gaps Before They Become Incidents

Most teams discover these gaps during incident response. The goal is finding them on a boring Tuesday afternoon instead.

While you won’t need a full red team engagement to uncover these issues, you will need a handful of structured review processes — at least if you want to catch problems before attackers do.

Quarterly IAM cross-cloud review

Run a report showing every federated identity relationship and every cross-cloud trust policy. Print it out — physically, on paper. Review it. Look at what each trust relationship grants on each side. Document why it exists. Can’t justify it? Delete it. Four hours per quarter. Catches roughly 80% of creeping privilege problems before they compound.

Centralized log aggregation audit

Verify that CloudTrail, Cloud Audit Logs, and Azure Monitor are all shipping to a single ingestion point. Then run a test: make one API call in AWS, one in GCP, one in Azure. Verify all three appear in your central system within five minutes. One doesn’t show up? Fix it immediately. Do this monthly — not quarterly, monthly. Log gaps grow fast.
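The pass/fail part of that monthly test is easy to script. This sketch assumes you record when each test call was made and when (if ever) it became searchable in the central system. How you make the calls and query your SIEM is up to your tooling, so both are left as plain inputs here. The source names and the sample timestamps are placeholders.

```python
from datetime import datetime, timedelta, timezone

EXPECTED_SOURCES = ("aws", "gcp", "azure")


def check_canaries(sent_at, seen_at, max_delay=timedelta(minutes=5)):
    """Compare when each test call was made with when it reached the SIEM.

    sent_at: {source: datetime the test API call was made}
    seen_at: {source: datetime the event became searchable}; key absent if never seen
    Returns {source: "ok" | "late" | "missing"}.
    """
    status = {}
    for source in EXPECTED_SOURCES:
        arrived = seen_at.get(source)
        if arrived is None:
            status[source] = "missing"
        elif arrived - sent_at[source] > max_delay:
            status[source] = "late"
        else:
            status[source] = "ok"
    return status


# Placeholder run: AWS arrives in 2 minutes, GCP in 9, Azure never shows up.
T0 = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
EXAMPLE_SENT = {source: T0 for source in EXPECTED_SOURCES}
EXAMPLE_SEEN = {
    "aws": T0 + timedelta(minutes=2),
    "gcp": T0 + timedelta(minutes=9),
}

print(check_canaries(EXAMPLE_SENT, EXAMPLE_SEEN))
```

Anything other than three “ok” results is the signal to stop and fix the pipeline.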

Policy equivalence review

For every sensitive control — data exfiltration prevention, encryption enforcement, network isolation — document the equivalent policy in each cloud. Run dry-runs. Test them. Sign off that they’re actually equivalent, not just structurally similar. Update the documentation whenever a policy changes. Automate this with IaC wherever possible.

Blast radius testing

Assume one cloud is compromised. Can an attacker reach the others? Test the exact attack paths described above — assume a federated role from one cloud and see what’s accessible in another. Try to move data across cloud boundaries. See if your logs catch it within a reasonable detection window. Fix whatever doesn’t work before someone else finds it first.
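Before running the live test, it can help to map the paths on paper. One simple way, sketched below, is to write every “X can act as Y” or “X can read Y” relationship as an edge and run a breadth-first search from the identity you assume is compromised. The edge list and every name in it are invented for illustration; building the real one from your trust policies and role bindings is the actual work.

```python
from collections import deque


def reachable(trust_edges, start):
    """Breadth-first search over 'X can act as / access Y' edges.

    Returns {target: path_from_start} for everything reachable from `start`.
    """
    paths = {start: [start]}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in trust_edges.get(current, ()):
            if nxt not in paths:
                paths[nxt] = paths[current] + [nxt]
                queue.append(nxt)
    del paths[start]  # the starting point is not part of its own blast radius
    return paths


# Invented edges: a GCP service account can assume an AWS role, which in
# turn can export snapshots and write to a bucket.
EXAMPLE_EDGES = {
    "gcp:sa/deployer": ["aws:role/cross-cloud-deployer"],
    "aws:role/cross-cloud-deployer": ["aws:rds/snapshots", "aws:s3/export-bucket"],
    "azure:app/reporting": ["azure:storage/reports"],
}

for target, path in reachable(EXAMPLE_EDGES, "gcp:sa/deployer").items():
    print(" -> ".join(path))
```

Every printed path that crosses a cloud boundary is a candidate for the hands-on test: try it for real, then check whether your logs caught it.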

These gaps don’t require sophisticated fixes. They require consistency and attention — two things that are surprisingly rare in multi-cloud environments where ownership is fragmented across three different platform teams. The cost of ignoring them is a breach that takes weeks to detect and months to fully understand. The cost of catching them early is a few afternoons of genuinely boring security work. I know which I’d choose.
